
Protecting User Data at Pin Up: SSL and Encryption

When and how to safely enable TLS 1.3 without breaking clients?

Pin Up implements TLS 1.3, formalized in RFC 8446, which reduces the full handshake to one RTT and removes the static RSA exchange, CBC modes, and SHA-1, leaving only AEAD ciphers (TLS_AES_128_GCM_SHA256, TLS_AES_256_GCM_SHA384, TLS_CHACHA20_POLY1305_SHA256), thereby improving security and reducing latency at the same time (IETF, RFC 8446, 2018). TLS 1.2 remains acceptable when using strong cipher suites, but migration to TLS 1.3 is recommended for new systems (NIST SP 800-52r2, 2019). For payment scenarios, TLS 1.2 is the minimum acceptable level, with legacy versions TLS 1.0/1.1 excluded as non-compliant with “strong cryptography” (PCI DSS v4.0, 2022). In practice, TLS 1.3 is enabled safely by offering it alongside a limited, uniform set of secure TLS 1.2 suites (ECDHE_ECDSA/RSA with AES-GCM and ChaCha20-Poly1305), which provides faster TTFB without introducing compatibility regressions in browsers and mobile clients.
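The "TLS 1.3 plus a strict TLS 1.2 profile" idea can be sketched with Python's standard ssl module. This is a minimal illustration, not a description of any production setup: the function name build_server_context is hypothetical, and the cipher string assumes an OpenSSL-backed Python build.

```python
import ssl

def build_server_context() -> ssl.SSLContext:
    """TLS 1.3 plus a strict TLS 1.2 profile (ECDHE key exchange, AEAD ciphers only)."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuses TLS 1.0/1.1
    # OpenSSL cipher string; it governs TLS 1.2 and below only.
    # TLS 1.3 suites are managed separately by the library and stay enabled.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    return ctx

ctx = build_server_context()
# TLS 1.3 suite names start with "TLS_"; everything else is the TLS 1.2 profile.
tls12_suites = [c["name"] for c in ctx.get_ciphers() if not c["name"].startswith("TLS_")]
```

A real server would also call ctx.load_cert_chain(...) with its certificate and key before accepting connections.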

Secure migration is implemented in stages through canary enablement, Handshake Success Rate metrics, and the distribution of protocol versions across client families to accommodate older Android (≤5.x) and enterprise proxies (IETF TLS WG, 2018–2022; Chromium Platform Status, 2019–2022). Experience from major providers demonstrates the success of the “TLS 1.3-on + strict TLS 1.2” strategy: with initial enablement at the edge (CDN/balancers) and maintaining ECDHE 1.2 suites, the handshake success rate exceeds 99.9%, and single failures are localized in legacy middleboxes (Cloudflare, 2019–2021). In a regional marketplace case study, enabling TLS 1.3 with a fallback to 1.2 and maintaining ALPN for HTTP/2/3 did not worsen the SLO, and the median TTFB decreased by 6–9% on mobile networks (Cloudflare Performance Reports, 2020; Google Chrome Team, 2020). This approach reduces the risk of breakage and ensures predictable behavior in mixed networks.
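A canary gate on Handshake Success Rate per client family can be sketched as follows. The 99.9% threshold mirrors the figure quoted above; the minimum sample size, the class name, and the family labels are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Iterable, List

@dataclass
class HandshakeStats:
    family: str      # client family label, e.g. "android-5" (hypothetical naming)
    attempted: int   # handshakes started during the canary window
    succeeded: int   # handshakes completed

    @property
    def success_rate(self) -> float:
        return self.succeeded / self.attempted if self.attempted else 1.0

def families_blocking_rollout(stats: Iterable[HandshakeStats],
                              threshold: float = 0.999,
                              min_samples: int = 1000) -> List[str]:
    """Return client families whose success rate is below the threshold.

    Families with too few samples are skipped to avoid noisy decisions.
    """
    return [s.family for s in stats
            if s.attempted >= min_samples and s.success_rate < threshold]

canary = [
    HandshakeStats("chrome-desktop", 100_000, 99_990),
    HandshakeStats("android-5", 2_000, 1_990),
    HandshakeStats("legacy-proxy", 10, 5),  # below min_samples, ignored
]
blocked = families_blocking_rollout(canary)
```

An empty result would let the rollout proceed to the next stage; a non-empty one points at the client families to investigate first.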

Documenting cryptographic policies is a mandatory requirement for ISO/IEC 27001:2022 audits and industry reviews. These policies specify minimum protocol versions, cipher priorities, curve parameters (P-256/P-384), and the TLS library update procedure (ISO/IEC 27001:2022; NIST SP 800-56A, 2018). In the context of personal data and payment information, such policies demonstrate that state-of-the-art measures are in place and mitigate regulatory risks during cross-border transfers (Law of Azerbaijan on Personal Data, 2023; GDPR, 2018). In a banking case, banning CBC/RC4/3DES, using ECDHE, and making PFS mandatory made it possible to pass an unscheduled audit, and the transition to ECDSA certificates with RSA-PSS as a backup reduced the handshake time on modern clients compared to pure RSA (Mozilla SSL Configuration Guidelines, 2024; IETF, 2018).

Common migration mistakes include prematurely disabling TLS 1.2, incorrect cipher prioritization, failure to consider the risk of 0-RTT replay, and the impact of middleboxes on ALPN/QUIC (IETF, RFC 8446, 2018; IETF, RFC 9000/9114, 2021–2022). External testing shows that mixing CBC suites in TLS 1.2 and incomplete ECDHE support lower SSL Labs scores and create a false sense of compatibility, while abandoning static RSA and explicitly prioritizing AEAD improves both security and speed (SSL Labs Documentation, 2024; OWASP ASVS 4.0.3, 2021). In a mobile traffic case on ARM devices running Android 5–7, prioritizing ChaCha20‑Poly1305 over AES‑GCM for clients without AES‑NI eliminated CPU degradation and returned the median TTFB to the baseline, confirming the value of adaptive prioritization (Google Security Blog, 2016; Cloudflare Blog, 2020).

Which ciphers should be left for A+ on SSL Labs?

The criteria for achieving an A/A+ rating on SSL Labs are the use of AEAD suites with PFS, disabling legacy algorithms, and correct configuration of protocol versions, which is consistent with RFC 8446 and the requirements of OWASP ASVS 4.0.3 (IETF, 2018; OWASP ASVS, 2021). For TLS 1.3, the cipher suite set is fixed and secure by default. For TLS 1.2, ECDHE_ECDSA_WITH_AES_128_GCM_SHA256, ECDHE_ECDSA_WITH_AES_256_GCM_SHA384, ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256, and their RSA equivalents should be retained, with CBC/RC4/3DES and static RSA exchange disabled (Mozilla SSL Configuration Guidelines, 2024). In practice, it is also advisable to have an ECDSA certificate (P-256/P-384) and a fallback RSA-PSS for broad compatibility. This choice ensures robustness, resistance to legacy mode attacks, and predictable results from external scanners without user experience regressions.

Prioritizing ChaCha20-Poly1305 for clients without AES-NI hardware acceleration yields gains on mobile networks and ARM devices, while AES-GCM remains optimal on x86 platforms with acceleration support (Google Security Blog, 2016; Cloudflare Blog, 2020). CDN practice shows that a server cipher preference configuration with AES-GCM and ChaCha20 in the first positions ensures the lowest median TTFB in a mixed audience while maintaining an A+ score (Cloudflare Performance Reports, 2020; SSL Labs Documentation, 2024). In a pilot deployment for a news portal, such prioritization reduced TTFB by 5–8% for Android 5–7, while maintaining the same result for modern desktops with AES-NI. This prevents clients from being steered to a suboptimal cipher and stabilizes the metrics.

Special attention should be paid to DHE suites with weak modulus, as well as deprecated curves. NIST SP 800-56A recommends using P-256 or P-384 curves, and for DHE, parameters of at least 2048 bits; however, ECDHE is preferred due to its higher performance and compatibility (NIST SP 800-56A, 2018). In several public case studies, removing DHE suites with 1024-bit parameters resolved SSL Labs “Weak DH” warnings and prevented intermittent score drops due to client library updates (SSL Labs Changelog, 2022–2024; Mozilla SSL Guidelines, 2024). A comprehensive policy should document rejected ciphers and minimum parameters to ensure reproducibility at scale.
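A documented policy of rejected ciphers becomes reproducible when it is also expressed as a check. The sketch below filters TLS 1.2 suites by their IANA names. As a simplifying assumption it rejects all DHE suites rather than checking their parameter size, in line with the stated preference for ECDHE; the function and constant names are hypothetical.

```python
# Substrings that mark legacy algorithms in IANA cipher suite names.
REJECTED_MARKERS = ("_CBC_", "_RC4_", "_3DES_", "_NULL_", "_EXPORT")

def is_allowed_tls12_suite(iana_name: str) -> bool:
    """True only for ECDHE key exchange combined with an AEAD cipher."""
    if any(marker in iana_name for marker in REJECTED_MARKERS):
        return False
    if not iana_name.startswith("TLS_ECDHE_"):
        return False  # excludes static RSA and (by assumption) all DHE suites
    return "_GCM_" in iana_name or "CHACHA20_POLY1305" in iana_name

configured = [
    "TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256",
    "TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305_SHA256",
    "TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA",
    "TLS_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_DHE_RSA_WITH_AES_128_GCM_SHA256",
]
violations = [s for s in configured if not is_allowed_tls12_suite(s)]
```

Running such a check in CI against the deployed suite list would surface policy violations before an external scanner does.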

What is 0-RTT and is it dangerous to enable it?

0-RTT in TLS 1.3 allows the client to send data upon session resumption without an additional RTT, which speeds up the first useful response but opens a replay vector within the lifetime of the pre-shared key (PSK) (IETF, RFC 8446, 2018). IETF recommendations prescribe limiting 0-RTT to idempotent operations (GET/HEAD) and using server-side anti-replay mechanisms with short TTLs for PSK (IETF, RFC 8446, 2018). In ISP measurements, the gain from 0-RTT in mobile networks with an RTT of 80–150 ms amounts to tens of milliseconds for static content, while it should be disabled for operations with state changes (Cloudflare Blog, 2018; Google Chrome Networking, 2019). This mode is useful for accelerating the loading of public resources and should be filtered at the gateway level.

Proper implementation of 0-RTT requires application discipline and infrastructural support. This is achieved in practice by enabling 0-RTT for CDN caching routes, disabling POST/PUT/DELETE, deduplicating requests by tokens and addresses, and logging potential repeats for analysis (IETF, RFC 8446, 2018; IETF, RFC 8740, 2020). In a regional media case, enabling 0-RTT only for GET requests to static content reduced the TTFB by 30–60 ms without incidents of repeated payments or duplicate orders, since all state-changing methods were forced to 1-RTT (Cloudflare Blog, 2018; Akamai Performance Report, 2020). This distinction minimizes the risk of business impact while maintaining a noticeable acceleration of cold queries.
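The rule "early data only for GET/HEAD, everything else waits for the full handshake" can be sketched as a gateway-side check. It assumes the TLS terminator marks early-data requests with the Early-Data: 1 header and that rejected requests receive status 425 (Too Early), as defined in RFC 8470; the function name is hypothetical.

```python
from typing import Mapping, Optional

SAFE_METHODS = frozenset({"GET", "HEAD"})

def early_data_status(method: str, headers: Mapping[str, str]) -> Optional[int]:
    """Return 425 when an unsafe request arrived as 0-RTT early data, else None."""
    normalized = {key.lower(): value.strip() for key, value in headers.items()}
    arrived_early = normalized.get("early-data") == "1"
    if arrived_early and method.upper() not in SAFE_METHODS:
        return 425  # Too Early: the client should retry after the handshake completes
    return None  # safe to pass the request on to the application
```

For example, early_data_status("POST", {"Early-Data": "1"}) returns 425, while the same call with "GET" returns None.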

How to select and manage SSL certificates for a website or API?

DV/OV/EV certificate types are governed by the CA/B Forum Baseline Requirements, where DV confirms domain control, OV confirms legal entity ownership, and EV confirms extended validation with registration data verification (CA/B Forum Baseline Requirements, 2024; CA/B Forum EV Guidelines, 2024). From 2018 to 2020, browsers mandated Certificate Transparency for publicly trusted certificates and reduced the maximum validity period to 398 days to improve operational security and ecosystem responsiveness (Google Chrome CT Policy, 2018; Apple Certificate Lifetimes, 2020). In practice, DV is justified for content and support domains, while OV/EV are used in payment and back-office areas to enhance trust and traceability of organizational verification. This approach simplifies audits and mitigates risks.

Certificate lifecycle management is based on ACME automation (IETF, RFC 8555, 2019), where issuance and renewal are initiated by the client via HTTP-01/DNS-01, and OCSP stapling is enabled on the server side to reduce dependence on remote OCSP endpoints (IETF, RFC 6960, 2013). In distributed systems, a unified inventory registry (domains, types, CAs, expirations), 30/15/7-day alerts, and canary activations before rotation are practical. Kubernetes makes extensive use of cert-manager with DNS-01 for wildcard certificates and separate SAN certificates for public APIs, simplifying revocation and risk isolation (cert-manager Project Docs, 2023; Let’s Encrypt Integration Guides, 2022). This architecture minimizes renewal downtime and makes management predictable.

In payment and regulatory environments, PCI DSS v4.0 requires “strong cryptography” and proper certificate validation across the entire value chain, including CDN/WAF, while ISO/IEC 27001:2022 requires documented key and certificate management procedures (PCI SSC, 2022; ISO/IEC 27001:2022). These practices include eliminating self-signed certificates on external façades, auditing CT logs for erroneous issuances, and defining target RTOs/SLAs for revocation (via CRL/OCSP) within minutes (Google Certificate Transparency, 2018; CA/B Forum BR, 2024). In B2B integrations, mTLS with OV certificates is used, which facilitates client attribution and prompt revocation in the event of a key compromise. This scheme reduces operational and regulatory risks.

Browser UX changes since 2019 removed the EV “green bar,” reducing visual differentiation. However, EV has retained some value in sectors with strict requirements for legal entity verification (Chrome Security UX Updates, 2019; Mozilla Security UI, 2019). At the same time, the mass rollout of Let’s Encrypt DV since 2015 normalized the 90-day rotation and pushed for widespread automation, including integration with CI/CD and secret managers/HSMs (Let’s Encrypt Launch, 2015; IETF RFC 8555, 2019). In a case with 120+ domains, switching to ACME workflows with HSM key storage eliminated certificate-expiry incidents and stabilized the A/A+ external rating on SSL Labs without manual intervention. This shows that the value lies in process discipline, not in visual indicators.

EV, OV, or DV — which to choose for a fintech project?

The choice of certificate type in fintech is determined by the domain risk profile and compliance requirements: DV confirms domain ownership and is suitable for static and auxiliary zones, OV confirms legal entity ownership and is a practical standard for B2B/API and dashboards, EV provides for in-depth verification and is used for payment facades and domains with increased regulatory expectations (CA/B Forum EV Guidelines, 2024; PCI SSC Guidance, 2022). Browser UI differentiation for EV has been reduced since 2019, so the role of EV has shifted to legal traceability and working with auditors (Chrome Security UX, 2019). In a typical fintech case, a public landing page is served by DV with a 90-day rotation, while the payment domain and dashboard are served by OV/EV from a commercial CA with an issuance/revocation SLA in hours. This meets the expectations of partners and auditors.

Sensible comparison criteria include the depth of organization verification (none/DV, standard/OV, extended/EV), issuance timeframes (minutes/DV, days/OV, days-weeks/EV), cost (low/medium/high), internal compliance requirements, and contractual revocation SLAs (CA/B Forum BR, 2024; WebTrust for CAs, 2023). For mTLS APIs, OV is most often chosen for counterparty verification, while for browser-based payment gateways, OV/EV is chosen depending on industry requirements and auditor expectations (PCI DSS v4.0, 2022). In the processing gateway case, choosing EV from a CA with local support and a management API secured a fixed 2-hour revocation SLA and simplified the annual audit. This approach concentrates cost and control where they provide the greatest regulatory impact.

A consolidated criteria table helps standardize decisions and prevent re-issuance after audits. CT logging, expiration limits (≤398 days), and automation compatibility (ACME/API) are relevant for all types, as is a wildcard ban on critical payment domains (Google CT Policy, 2018; Apple Certificate Policy, 2020). In a practical review, a fintech company retained EV only on payment domains and OV on dashboards, while migrating the remaining 60+ subdomains to DV/ACME, reducing overall costs by 30–35% while still meeting PCI DSS requirements and internal policies (PCI SSC, 2022; Let’s Encrypt Case Studies, 2021). This confirms that proper domain classification improves management efficiency and transparency.

How to automate certificate renewal without downtime?

ACME (IETF RFC 8555, 2019) standardizes automated issuance and renewal via HTTP-01/DNS-01, while short expiration periods (90 days for Let’s Encrypt) reduce cryptographic risk and encourage process discipline (Let’s Encrypt, 2019; IETF RFC 8555, 2019). To achieve zero downtime, a “two-certificate” scheme is implemented: issuing the next certificate 7–14 days in advance, validating the chain and OCSP stapling, performing an atomic switchover on the balancer/ingress, and monitoring the Handshake Success Rate (IETF RFC 6960, 2013; Mozilla SSL Guidelines, 2024). In Kubernetes, cert-manager updates secrets, the ingress controller restarts only the affected pods, and smoke tests verify the certificate chain and OCSP status. This arrangement ensures service continuity even during mass updates.

In a hybrid infrastructure, a unified inventory, 30/15/7-day alerts, and periodic “dry” renewals in staging are essential. For wildcard zones, DNS-01 removes HTTP validation restrictions and covers the subdomain tree, while for public APIs, SAN certificates are more appropriate for simplified revocation and risk isolation (Let’s Encrypt Docs, 2022; cert-manager Docs, 2023). In a regional marketplace case, combining DNS-01 for *.example.az and individual SANs for payment domains eliminated duplication and accelerated revocation in critical zones to minutes. This reduces the likelihood of certificate failures and SLA incidents at night and on weekends.

Revocation and status checking are part of a zero-downtime strategy. OCSP stapling reduces validation latency and dependence on the availability of CA OCSP servers, while the TLS Feature (must-staple) extension makes validation stricter and, at the same time, raises the requirements for refreshing stapled responses on time (IETF RFC 7633, 2015; IETF RFC 6960, 2013). Errors occur when CRL updates are infrequent and there are no backup timeouts, which leads to mass failures during short-term OCSP outages. In the case of a payment aggregator, the implementation of short TTLs for OCSP and hot swapping of stapled responses reduced the frequency of “certificate untrusted” incidents to a statistically negligible level (PCI SSC Guidance, 2022; Cloudflare OCSP Best Practices, 2023). This makes the system’s behavior predictable during third-party service failures.
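The timing requirement for stapled responses can be illustrated with a small refresh rule: fetch a new OCSP response once a fixed fraction of the current response's validity window has passed. The halfway point used here is an assumption for illustration, not a value taken from the cited sources, and the function name is hypothetical.

```python
from datetime import datetime, timezone

def staple_needs_refresh(this_update: datetime,
                         next_update: datetime,
                         now: datetime,
                         refresh_fraction: float = 0.5) -> bool:
    """True once `refresh_fraction` of the OCSP response lifetime has elapsed."""
    lifetime = next_update - this_update
    refresh_at = this_update + lifetime * refresh_fraction
    return now >= refresh_at

issued = datetime(2024, 1, 1, tzinfo=timezone.utc)   # thisUpdate of the response
expires = datetime(2024, 1, 8, tzinfo=timezone.utc)  # nextUpdate of the response
early = staple_needs_refresh(issued, expires, datetime(2024, 1, 2, tzinfo=timezone.utc))
late = staple_needs_refresh(issued, expires, datetime(2024, 1, 5, tzinfo=timezone.utc))
```

Refreshing well before nextUpdate leaves room for retries if the CA's OCSP responder is briefly unavailable.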

How to configure TLS crypto configuration for maximum speed and security

A balance of strength and performance is achieved by choosing AEAD ciphers and PFS exchanges in conjunction with the hardware capabilities of clients and servers. RFC 8446 specifies secure ciphers for TLS 1.3, while TLS 1.2 requires explicit disabling of CBC/RC4/3DES and static RSA exchange (IETF, 2018). OWASP ASVS 4.0.3 recommends ECDHE for PFS and modern message authentication (GCM/Poly1305), and Mozilla SSL Guidelines 2024 provides ready-made profiles for “modern/intermediate” that take interoperability into account (OWASP, 2021; Mozilla, 2024). In mixed audiences, the optimal configuration also takes into account prioritization of ChaCha20-Poly1305 for clients without AES-NI and AES-GCM for x86 platforms, which reduces the median TTFB without reducing strength (Google Security Blog, 2016; Cloudflare, 2020).

Hardware specifics are critical: AES-GCM is efficient on CPUs with AES-NI (Intel/AMD), while ChaCha20-Poly1305 is stable on ARM devices, especially older Android devices (IETF RFC 8439, 2018; Google Security Blog, 2016). Cloudflare measurements show a 5–10% performance advantage for ChaCha20 in typical mobile scenarios, confirming the feasibility of adaptive cipher prioritization based on client capabilities (Cloudflare Blog, 2020). In a portal case study with 40% mobile traffic, adding ChaCha20 to the top positions for clients without AES-NI reduced the median page load time by ~120 ms, while maintaining an A+ rating on SSL Labs and compatibility with older browsers through a limited TLS 1.2 profile. This configuration minimizes CPU costs and reduces user latency.

The historic transition from RSA to ECDHE exchange in TLS 1.2 introduced PFS for secure sessions, and TLS 1.3 solidified this practice by eliminating legacy mechanisms (IETF RFC 5246/8446, 2008/2018). NIST SP 800-56A/B recommends P-256/P-384 curves and key length parameters that are expected to remain resistant for years under current attack assessments (NIST SP 800-56A, 2018; NIST SP 800-57, 2020). Configuration errors often involve enabling weak curves or 1024-bit DHE, which can lead to “weak DH” warnings and rating downgrades. In the banking API case, replacing DHE with ECDHE P-256 and unifying profiles on load balancers reduced handshake time by 15% and increased compatibility.

When to choose ChaCha20‑Poly1305 over AES‑GCM?

ChaCha20-Poly1305 is an AEAD scheme optimized for software implementation on platforms without AES hardware acceleration, providing comparable security to AES-GCM with better performance on ARM (IETF RFC 8439, 2018; Google Security Blog, 2016). On devices without AES-NI, ChaCha20 provides more predictable encryption times and smaller CPU load spikes, which reduces TTFB variability and improves resilience in mobile networks. Measurements by Cloudflare and Google demonstrate significant improvements on Android devices of generations 5–7, especially at high RTT (Cloudflare Blog, 2020; Google Chrome Team, 2019). For users, this means more stable download speeds and less sensitivity to network fluctuations.

A practical strategy is to conditionally prioritize ChaCha20 based on client capability signals (e.g., indirectly via User-Agent/JA3 profiles) and keep AES-GCM in top positions for clients with AES-NI (Mozilla SSL Guidelines, 2024; Salesforce JA3 Fingerprinting, 2017). Server cipher preference mode is enabled on the server to force the optimal choice, and adaptive prioritization is enabled on the CDN. In a case study of a media service where 60% of traffic is mobile users, the median TTFB decreased by 90 ms after raising ChaCha20 for the relevant clients, with no regressions on desktops with AES-NI (Cloudflare Blog, 2020; Akamai State of the Web, 2021). This demonstrates that “smart prioritization” is superior to static lists.
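One simple capability signal is the client's own ordering: a client that lists ChaCha20 first is likely to lack AES hardware acceleration. The sketch below applies server preference but honors that signal, similar in spirit to OpenSSL's SSL_OP_PRIORITIZE_CHACHA option. It is a simplified model of suite selection, not a TLS implementation, and the function name is hypothetical.

```python
from typing import List, Optional

def choose_suite(client_offer: List[str], server_pref: List[str]) -> Optional[str]:
    """Pick a suite by server preference, promoting ChaCha20 when the client leads with it."""
    offered = set(client_offer)
    candidates = [suite for suite in server_pref if suite in offered]
    if not candidates:
        return None  # no common suite: the handshake would fail
    if client_offer and "CHACHA20" in client_offer[0]:
        chacha = [suite for suite in candidates if "CHACHA20" in suite]
        if chacha:
            return chacha[0]
    return candidates[0]

SERVER_PREF = [
    "TLS_AES_128_GCM_SHA256",
    "TLS_AES_256_GCM_SHA384",
    "TLS_CHACHA20_POLY1305_SHA256",
]
mobile = choose_suite(["TLS_CHACHA20_POLY1305_SHA256", "TLS_AES_128_GCM_SHA256"], SERVER_PREF)
desktop = choose_suite(["TLS_AES_128_GCM_SHA256", "TLS_CHACHA20_POLY1305_SHA256"], SERVER_PREF)
```

Here the mobile-style offer resolves to ChaCha20-Poly1305 and the desktop-style offer to AES-128-GCM, with a single server-side list.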

Errors arise when ChaCha20 is treated as unconditionally superior for all clients: on servers and desktops with AES-NI, AES-GCM is faster and more energy-efficient, especially under high concurrency (Google Security Blog, 2016; Intel AES-NI Guidance, 2020). The correct approach is to combine both cipher suites, provide PFS over ECDHE, and clearly document the selection policy, including testing procedures in CI/CD. Regular A/B benchmarks and TTFB/CPU analysis on client samples make it possible to confirm performance hypotheses and avoid regressions during OpenSSL/BoringSSL updates (OpenSSL 1.1.1/3.0 Release Notes, 2018–2021; BoringSSL Notes, 2020). This supports reproducibility and stability.

How to enable PFS and check its operation?

Perfect Forward Secrecy means that compromising the server’s long-term key does not allow decryption of past sessions, provided the exchanged keys were ephemeral (ECDHE/DHE). In TLS, this is achieved by selecting ECDHE-enabled cipher suites and disabling static RSA exchange, as recommended by OWASP ASVS 4.0.3 and detailed in NIST SP 800-56A (OWASP, 2021; NIST, 2018). In practice, it is sufficient to prioritize ECDHE suites, enable P-256/P-384 curves, and remove obsolete schemes. Then, confirm the presence of PFS in SSL Labs reports (“Forward Secrecy: Yes”) and testssl.sh. The user benefit is the protection of historical traffic even when a compromise is detected late.
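Alongside SSL Labs and testssl.sh, a quick local check can inspect the cipher negotiated with an endpoint. The sketch assumes OpenSSL-style cipher names as reported by Python's ssl module; both function names are hypothetical, and the host passed to negotiated_cipher is whatever endpoint is under test.

```python
import socket
import ssl
from typing import Tuple

def has_forward_secrecy(cipher_name: str, protocol: str) -> bool:
    """Classify a negotiated cipher by its OpenSSL name and protocol version."""
    if protocol == "TLSv1.3":
        return True  # full TLS 1.3 handshakes always use ephemeral (EC)DHE
    return cipher_name.startswith(("ECDHE-", "DHE-"))

def negotiated_cipher(host: str, port: int = 443, timeout: float = 5.0) -> Tuple[str, str]:
    """Connect to host:port and return (cipher name, protocol version)."""
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            name, protocol, _bits = tls.cipher()
            return name, protocol
```

Calling has_forward_secrecy(*negotiated_cipher(host)) against a staging endpoint gives a yes/no answer for the suite that one client actually negotiated; it does not replace a full scan of every suite the server accepts.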

Implementing PFS requires testing legacy clients and potentially allocating a “compatible” segment that supports extended TLS 1.2 profiles without regression. The minimum acceptable parameters for DHE are 2048 bits, but ECDHE is preferred due to its performance and widespread support (NIST SP 800-56A, 2018; Mozilla SSL Guidelines, 2024). In the API gateway case study, removing 1024-bit DHE and switching to ECDHE P-256 eliminated “weak DH” warnings and accelerated the handshake without degrading compatibility for legacy clients with TLS 1.2. This confirms that choosing the right parameters simultaneously improves security and speed.

Typical mistakes include attempting to “broaden compatibility” by enabling CBC and static RSA, which breaks PFS and lowers ratings, as well as leaving weak DH curves and parameters (SSL Labs Docs, 2024; OWASP ASVS, 2021). Proper operation includes constant configuration monitoring via CI profiles, handshake regression tests, and committing cipher policies to IaC repositories (Terraform/Ansible). In a case study of a large platform, committing ciphers and versions to IaC and automatically running testssl.sh with every ingress change eliminated configuration drift and ensured a stable A+ rating. This reduces the risk of manual errors in production.

What are the requirements for TLS according to laws and standards in Azerbaijan?

The Law of Azerbaijan “On Personal Data” requires the use of modern security measures when processing and transferring personal data, including the use of cryptographic protocols and the protection of communication channels (2023 edition). In international payment scenarios, PCI DSS v4.0 applies, requiring the use of TLS 1.2+ with strong ciphers and correct certificate validation at all stages of the path (PCI SSC, 2022). ISO/IEC 27001:2022 requires a documented cryptographic policy and key and certificate management processes, which includes the selection of protocols, algorithms, key lengths, and rotation procedures (ISO/IEC, 2022). In practice, this means fixing the minimum acceptable versions (TLS 1.2+, preferably 1.3), PFS, and prohibiting obsolete algorithms in the security policy.

When transferring personal data across borders, an adequate level of protection must be ensured on the recipient side, including through technical measures such as TLS 1.2+/1.3 and organizational guarantees agreed upon in contracts (Law of Azerbaijan, 2023; GDPR, 2018). For banking traffic to EU data centers, this typically includes binding to certified CAs, implementing PFS, and auditing the chain of trust, which simplifies compliance with local and European requirements (ENISA Guidelines, 2021; EDPB Guidelines, 2021). In the case of a regional bank, documenting a TLS 1.3 channel with ECDHE and CT logging enabled cross-border flows to pass inspection without any issues. This practical alignment with standards reduces the risk of regulatory non-compliance.

Regulatory checks technically confirm compliance through TLS configuration audits, certificate chain verification, the presence of HSTS, and the absence of outdated versions and weak ciphers (OWASP ASVS, 2021; Mozilla SSL Guidelines, 2024). Non-compliance may result in remediation orders and, in severe cases, restrictions on data processing activities. In payment provider cases, deprecating TLS 1.0/1.1 and migrating to TLS 1.2/1.3 with AEAD and PFS was a mandatory component of passing annual compliance assessments (PCI SSC, 2022; Visa Global Registry Guidance, 2021). For organizations, this means a process of regular audits and updates.

Is it possible to store and process personal data outside of Azerbaijan?

Cross-border transfers of personal data are permitted subject to adequate protection in the recipient country and compliance with contractual safeguards, including technical encryption measures during transmission, such as TLS 1.2+/1.3 (Law of Azerbaijan, 2023; EDPB Recommendations, 2021). European practice relies on the GDPR, which provides for standard contractual clauses (SCCs) and adequacy assessments, which are applicable for EU residents, but similar logic for assessing measures is used in other jurisdictions (GDPR, 2018; ENISA, 2021). In the case of an IT provider from Baku, data hosting in Germany was accompanied by TLS 1.3 with ECDHE and AES-256-GCM, as well as contractual data protection provisions, which were accepted by the client and the local regulator. This combination of measures mitigates legal and technical risks.

In practice, organizations specify a list of technical and organizational measures in contracts—TLS versions, cipher suites, CA requirements, revocation and monitoring procedures—and add requirements for incident management and notification periods (ISO/IEC 27001:2022; EDPB, 2021). Enabling CT monitoring for unauthorized issuance of company domain certificates enhances control (Google CT Policy, 2018). Errors arise from the use of outdated protocols, mixed content, or the absence of formal agreements, which increases the risk of claims. Regular auditing of channels and documents minimizes these risks and simplifies interactions with relying parties.

A separate factor is traffic routing and localization: using regional CDN nodes and data centers reduces latency and the likelihood of additional points of failure, and simplifies compliance with “minimized transmission” requirements (Akamai State of the Internet, 2021; Cloudflare Network Map, 2024). In a service case with users in Baku, moving the primary TLS termination to the nearest CDN node followed by secure communication to the origin reduced RTT by 20–30% and simplified the proof of security measures for cross-border flows. This technical solution backs up legal guarantees with real effectiveness.

What are the TLS requirements for online payments?

PCI DSS v4.0 requires TLS 1.2 or higher for public payment interfaces, strong ciphers (AES-GCM/ChaCha20-Poly1305), proper certificate validation, and protection from downgrade attacks throughout the entire chain, including CDN/WAF (PCI SSC, 2022). NIST SP 800-52r2 recommends TLS 1.3 as a priority for new systems and clarifies unacceptable algorithms (NIST, 2019). PCI audit practices include technical checks for the absence of TLS 1.0/1.1 and the use of PFS and HSTS to exclude unsecured HTTP. In a case study of a payment aggregator in Azerbaijan, enabling TLS 1.3, PFS, and a strict cipher configuration allowed the company to pass its annual compliance assessment without any issues and also stabilized performance during peak hours.

Downgrade protection requires fixing the minimum protocol version on frontends and correctly declaring supported versions and ALPNs to prevent forced downgrades (IETF, RFC 8446, 2018; Mozilla SSL Guidelines, 2024). HSTS with preload prevents downgrades to HTTP and reduces the risk of attacks from public networks, especially in the presence of user-defined redirects (Chrome HSTS Preload List, 2024). After enabling HSTS and excluding TLS 1.0/1.1, downgrade incidents in the processing center disappeared, as recorded by external scanners. This increases the resilience of payment pages and APIs to active network attacks.
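A basic HSTS check parses the Strict-Transport-Security header and tests whether it looks ready for preloading. The one-year minimum max-age used below reflects the preload list's published submission requirement as understood here and should be treated as an assumption to verify; the function names are hypothetical.

```python
from typing import Dict

def parse_hsts(value: str) -> Dict[str, str]:
    """Split a Strict-Transport-Security value into lowercase directives."""
    directives: Dict[str, str] = {}
    for part in value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, _, arg = part.partition("=")
        directives[name.strip().lower()] = arg.strip().strip('"')
    return directives

def is_preload_ready(value: str, min_age: int = 31_536_000) -> bool:
    """True when max-age is long enough and includeSubDomains and preload are set."""
    directives = parse_hsts(value)
    try:
        max_age = int(directives.get("max-age", ""))
    except ValueError:
        return False  # max-age missing or not a number
    return (max_age >= min_age
            and "includesubdomains" in directives
            and "preload" in directives)
```

For example, is_preload_ready("max-age=63072000; includeSubDomains; preload") is True, while a bare "max-age=300" is not.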

Common mistakes in payment integrations include the use of wildcard certificates on critical domains, an incomplete trust chain, mixed content on payment pages, and a lack of regular OCSP/CT auditing (PCI SSC, 2022; W3C Mixed Content, 2020). Best practices include dedicating separate domains for payment, OV/EV certificates with short validity periods and automation, periodic SSL Labs/testssl.sh scans, and CT log monitoring (Google CT, 2018). In this case, domain segregation and the removal of wildcards in payment zones reduced operational risks and expedited incident investigations. This is consistent with the principle of minimizing the impact of a breach.

How to verify and maintain a secure TLS configuration

Maintaining a secure configuration is an ongoing process that includes auditing, monitoring, testing, and documentation. OWASP ASVS 4.0.3 mandates regular checks of protocol versions, cipher suites, certificate chains, and security headers, while the Mozilla SSL 2024 guide provides configuration profiles for different compatibility levels (OWASP, 2021; Mozilla, 2024). An efficient process is integrated into CI/CD: each configuration change triggers automated tests (testssl.sh, OpenSSL s_client) and an external SSL Labs scan. In an e-commerce case, moving baseline tests into the pipeline eliminated configuration drift and prevented rating degradation during library updates.

Initial diagnostics identify basic violations: outdated ciphers, incomplete trust chains, mixed content, and missing OCSP stapling. SSL Labs evaluates protocol versions, ciphers, and PFS, testssl.sh provides a detailed client/algorithm matrix, and Security Headers checks HSTS/CSP/Referrer-Policy (SSL Labs, 2024; testssl.sh Docs, 2023; SecurityHeaders.com, 2024). In an API service case, testssl.sh detected CBC enabled in TLS 1.2, which lowered the score to B; removing CBC and prioritizing AEAD returned the score to A+ and reduced the risk of padding attacks. This illustrates the value of regular offline scans.

Monitoring should include certificate expiration dates, OCSP/CRL status, changes in CT logs, and handshake success metrics. Provider best practices recommend alerts 30/15/7 days before expiration, “OCSP failure rate” detection, and alerts on new CT records for your domains (CA/B Forum BR, 2024; Cloudflare Best Practices, 2023). In a banking case, integrating OCSP validation into monitoring and automatic tickets for updating stapled responses prevented widespread outages during short-term outages of CA OCSP servers. This makes operations predictable and resilient to external failures.

How to find and fix mixed content?

Mixed content occurs when resources (scripts, styles, images) are loaded via HTTP on a page delivered via HTTPS, which compromises integrity and can allow content substitution. The W3C classifies mixed content as active (scripts/iframes are blocked) and passive (images may trigger warnings), and browsers are gradually tightening their blocking policies (W3C Mixed Content, 2020; Chrome Security Updates, 2019). Detection involves DevTools, specialized scanners, and static analysis, while correction involves mass link replacement to HTTPS, proxying through a custom domain, and the CSP directive upgrade-insecure-requests. This eliminates warnings and improves the security rating.

The practical plan includes an inventory of external resources, checking HTTPS support across third-party providers, and migrating to secure equivalents. Security Headers and Lighthouse help identify remaining policy issues, while automated CI tests prevent recurrence (SecurityHeaders.com, 2024; Google Lighthouse, 2024). In the case of a Baku news portal, a scan revealed that 15% of images were loaded via HTTP from a CDN; switching to HTTPS endpoints and enabling HSTS eliminated the warnings and improved Core Web Vitals. For users, this means stable loading and no blocking.

Common errors include old static links, widgets without HTTPS, incomplete redirect coverage, and missing HSTS. We recommend setting up automatic link checking in your pipeline and using Content-Security-Policy with block-all-mixed-content on critical pages (W3C, 2020; Mozilla Web Security Guidelines, 2024). In the media site case, the introduction of CSP and regular scans prevented the reappearance of mixed content after partner widget releases. This maintains a consistently clean configuration and reduces manual effort.
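Automatic checking in a pipeline can start with a static scan of rendered HTML for subresources that still use http://. The sketch below uses only the standard library and looks at src attributes and link href values; ordinary hyperlinks are skipped because navigation links are not mixed content. The class name and the sample URLs are illustrative.

```python
from html.parser import HTMLParser
from typing import List, Tuple

class MixedContentFinder(HTMLParser):
    """Collects (tag, url) pairs for subresources loaded over plain HTTP."""

    def __init__(self) -> None:
        super().__init__()
        self.findings: List[Tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if value is None:
                continue
            # src on any tag, or href on <link> (stylesheets, icons), is a subresource.
            is_subresource = name == "src" or (tag == "link" and name == "href")
            if is_subresource and value.strip().lower().startswith("http://"):
                self.findings.append((tag, value))

page = """
<link rel="stylesheet" href="http://static.example/site.css">
<script src="https://static.example/app.js"></script>
<img src="http://cdn.example/banner.png">
<a href="http://example.com/about">About</a>
"""
finder = MixedContentFinder()
finder.feed(page)
```

A CI job could fail the build whenever finder.findings is non-empty. URLs injected by scripts at runtime are not visible to a static scan, so this complements browser-based checks rather than replacing them.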

What alerts should I set for certificates and OCSP?

Timely alerts prevent downtime and validation errors. Ecosystem recommendations include 30/15/7-day certificate expiration notifications, OCSP failure rate monitoring, and monitoring CT log entries for your domains (CA/B Forum BR, 2024; Cloudflare Operational Best Practices, 2023). Monitoring should be based on Handshake Success Rate metrics, chain validation errors, and OCSP stapling timings, enabling early detection of degradations. For large domain fleets, correlation of events by CA and certificate type is useful. This makes operations predictable and reduces the risk of “nighttime disasters.”
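The 30/15/7-day schedule reduces to a small threshold function. The sketch below is illustrative: `expiry_alert` is a hypothetical name, and returning `0` for an already expired certificate is a convention chosen for this example.

```python
from datetime import datetime

ALERT_THRESHOLDS_DAYS = (30, 15, 7)


def expiry_alert(not_after: datetime, now: datetime):
    """Return the tightest threshold (in days) already crossed, 0 if expired, else None."""
    days_left = (not_after - now).days
    if days_left < 0:
        return 0  # certificate has already expired
    crossed = [t for t in ALERT_THRESHOLDS_DAYS if days_left <= t]
    return min(crossed) if crossed else None
```

In a real check, `not_after` could come from the `notAfter` field returned by `ssl.SSLSocket.getpeercert()`, converted with `ssl.cert_time_to_seconds`.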

Implementation is performed via Prometheus/Zabbix and specialized services (e.g., Cert Spotter, Keychest) with webhooks into incident management systems (testssl.sh CI, 2023; Cert Spotter Docs, 2024). In the banking infrastructure case study, adding OCSP checks and stapling timeouts reduced connection failures caused by expired stapled responses, and CT monitoring flagged a suspicious certificate issued for a look-alike domain, allowing for timely revocation (Google CT, 2018; Cloudflare, 2023). Such redundancy at the signal source level reduces the likelihood of missed issues.

Best practices include multi-layered timeouts and OCSP caching, fallback strategies, and logging all connection attempts with revoked certificates for forensic purposes (IETF RFC 6960, 2013; PCI SSC Guidance, 2022). In an e-commerce case, combining alerts based on time, chain, OCSP/CRL, and CT provided end-to-end visibility, allowing the detection of a failure in an intermediate certificate 48 hours before a scheduled renewal. This reduces operational risks and increases resilience to external CA infrastructure failures.

When is mTLS needed and how to implement it

mTLS is a mutual TLS authentication method that verifies both client and server certificates. This approach complies with Zero Trust principles and is used for internal APIs, B2B integrations, and payment gateways where strong party authentication is required (NIST SP 800-207, 2020; NIST SP 800-52r2, 2019). Implementation requires a client certificate management infrastructure: issuance, rotation, revocation via CRL/OCSP, and protection of private keys in HSM/KMS (CA/B Forum BR, 2024; NIST SP 800-57, 2020). In the bank’s case study, mTLS with OV certificates is used for API integrations with processing, and revocation occurs via automatic OCSP/CRL updates within 5 minutes. This minimizes the time of unauthorized access in the event of a compromise.
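On the server side, the core of mTLS is a TLS context that refuses clients without a valid certificate. The following sketch uses Python's standard `ssl` module; the function name and the file-path parameters are assumptions for illustration, and certificate issuance, rotation, and revocation checking are out of scope here.

```python
import ssl


def build_mtls_server_context(client_ca_file=None, cert_file=None, key_file=None):
    """Create a server-side TLS context that requires a client certificate."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # TLS 1.0/1.1 are not acceptable
    ctx.verify_mode = ssl.CERT_REQUIRED  # handshake fails without a valid client cert
    if client_ca_file:
        # Trust only the (internal) CA that issues client certificates.
        ctx.load_verify_locations(cafile=client_ca_file)
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

Trusting only a dedicated client CA, rather than the system trust store, keeps the set of accepted clients limited to certificates the organization issued itself.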

Mistakes in mTLS implementation include hardcoding certificates, manual rotation without a centralized registry, and ignoring expiration dates. Proper implementation involves using an ACME-compliant internal CA or certificate management API, centralized inventory, short expiration dates, and automatic revocation (IETF RFC 8555, 2019; WebTrust for CAs, 2023). In Kubernetes and service meshes (Envoy/Istio), mTLS is becoming the default setting, issuing short-lived certificates to pods (Istio Security Overview, 2023). This reduces operational overhead and simplifies scaling without losing control.

Experience shows the importance of segmentation: mTLS is appropriate for internal and B2B circles of trust, while public APIs with multiple dynamic clients are best served via OAuth2/OpenID Connect with additional TLS channel protection (IETF RFC 6749, 2012; OpenID Connect Core, 2014). In hybrid scenarios, mTLS is mandated only for critical routes, while token authentication with a limited TTL and client binding is used for other routes. In the fintech platform case, mTLS is used between microservices and in B2B interconnects, while OAuth2 is used for the public API, reducing the complexity of certificate management for thousands of clients while maintaining a high level of security. This is consistent with the principles of minimization and separation of concerns.

mTLS vs. OAuth2 – Which to Choose for an API

mTLS provides transport-layer authentication and is well-suited for machine-to-machine deployments with a fixed set of trusted clients, while OAuth2 addresses application-layer authorization and is scalable for user and partner scenarios (IETF RFC 6749, 2012; IETF RFC 8705, 2020). RFC 8705 describes mTLS as a client authentication method within OAuth2, combining the strengths of both approaches: TLS-level authenticity and granular permissions. In practical design, this translates into a combination: mTLS restricts access to critical endpoints, while OAuth2 manages permissions and delegation. This approach reduces the risk of client spoofing and simplifies access revocation.
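The combination can be expressed as a two-step decision: the transport check gates critical endpoints, and the token scopes decide what the caller may do. This is a sketch under stated assumptions: the route prefixes, the `access_decision` function, and the scope names are hypothetical, and the inputs are assumed to have been validated upstream (certificate by the TLS terminator, token by the authorization layer).

```python
# Hypothetical route prefixes that require a verified client certificate.
CRITICAL_PREFIXES = ("/payments/", "/internal/")


def access_decision(path, client_cert_verified, token_scopes, required_scope):
    """Combine transport-level (mTLS) and application-level (OAuth2) checks."""
    if path.startswith(CRITICAL_PREFIXES) and not client_cert_verified:
        return "deny: client certificate required"
    if required_scope not in token_scopes:
        return "deny: missing scope"
    return "allow"
```

For example, `access_decision("/payments/charge", False, {"pay"}, "pay")` is denied even though the token carries the right scope, because the route is critical and no client certificate was verified.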

Selection criteria include client type (static/dynamic), delegation requirements, audience size, compliance requirements, and operational rotation processes. For closed B2B clients, mTLS with OV certificates is used, while high-volume mobile/web clients use OAuth2, with private_key_jwt or mTLS client authentication (RFC 8705) for increased security (IETF RFC 8705, 2020; OpenID FAPI, 2021). The external partner API case uses OAuth2 with JWT and additional mTLS for payment routes, which enhances authenticity assurance while maintaining authorization flexibility. This reduces the attack surface and simplifies rights auditing.

Mistakes in selection arise when attempting to implement mTLS for a public API with tens of thousands of clients: issuance/rotation/revocation management becomes a bottleneck and increases maintenance costs. In such cases, OAuth2 with strong client authentication and short-lived tokens is more practical, reserving mTLS for “golden paths” and internal communications (OpenID FAPI, 2021; NIST SP 800-63B, 2017). In the marketplace case study, migrating public integrations to OAuth2 and retaining mTLS for critical backend routes reduced operational costs and simplified client SDK releases. This confirms the importance of matching the mechanism and the threat model.

How to revoke a client certificate quickly

Rapid revocation is critical when a key is compromised or permissions are changed. CA/B Forum BR prescribes CRL and OCSP mechanisms for status publication, and NIST SP 800-57 recommends minimizing status TTL and using automation in distribution (CA/B Forum BR, 2024; NIST SP 800-57, 2020). The must-staple practice (TLS Feature) forces the server to provide a current OCSP response, reducing latency and the client’s dependence on external OCSP endpoints (IETF RFC 7633, 2015; IETF RFC 6960, 2013). In the payment system, revocation is initiated by the internal CA API; the updated CRL and OCSP response reach the load balancers within minutes, and connection attempts with a revoked certificate are immediately rejected. This reduces the risk window.

Common mistakes include infrequent CRL updates (once per day), lack of OCSP stapling, and reliance on client verification. A short OCSP response lifetime, redundant distribution points, centralized logging of attempts with revoked certificates, and forensic dashboards are recommended (CA/B Forum BR, 2024; Cloudflare OCSP Best Practices, 2023). In a B2B integration case, after reducing the OCSP TTL to 5 minutes and replicating the CRL to regional nodes, the time to complete blocking of access was reduced from hours to minutes. This aligns mTLS security with real-time risks.
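The effect of a shorter status lifetime can be estimated with simple arithmetic: in the worst case, a revoked certificate keeps working until the last cached "good" status expires and the new status has propagated. The sketch below uses the update intervals mentioned above (daily CRL, 5-minute OCSP); the propagation delays are illustrative assumptions, not measurements.

```python
from datetime import timedelta


def worst_case_exposure(status_validity, propagation_delay):
    """Upper bound on how long a revoked certificate may still be accepted."""
    return status_validity + propagation_delay


# Daily CRL with an assumed 10-minute distribution delay.
daily_crl = worst_case_exposure(timedelta(hours=24), timedelta(minutes=10))
# 5-minute OCSP lifetime with an assumed 2-minute replication delay.
short_ocsp = worst_case_exposure(timedelta(minutes=5), timedelta(minutes=2))
```

Under these assumptions the window shrinks from just over a day to seven minutes, which matches the "hours to minutes" order of magnitude described in the case.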

To ensure resilience, it’s important to test for “black swan” scenarios such as OCSP unavailability, CRL corruption, and client incompatibility. Fallback strategies include caching the last “good” stapled response, gradually tightening the must-staple policy, and notifying certificate holders with SLAs for reissues (IETF RFC 6960, 2013; PCI SSC Guidance, 2022). This approach is supported by incident reports from providers where OCSP failures led to widespread failures with overly aggressive policies without fallback timeouts (Cloudflare, 2023). Balancing strictness and resiliency is critical to operational stability.
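Caching the last "good" stapled response can be sketched as follows. This is a simplified model rather than a real OCSP client: `OcspResponse` and `StapleCache` are hypothetical types, `fetch` stands for whatever callable queries the responder, and a responder outage is assumed to surface as `OSError`.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class OcspResponse:
    status: str            # "good", "revoked" or "unknown"
    next_update: datetime  # end of validity declared by the responder


class StapleCache:
    """Serves a fresh OCSP response, falling back to the last good one on outage."""

    def __init__(self, fetch):
        self._fetch = fetch      # callable returning an OcspResponse
        self._last_good = None

    def current(self, now):
        try:
            response = self._fetch()
        except OSError:  # responder unreachable
            cached = self._last_good
            if cached is not None and now < cached.next_update:
                return cached  # still within its validity window
            return None        # nothing valid to staple
        # Never keep serving a stale "good" after a non-good answer.
        self._last_good = response if response.status == "good" else None
        return response
```

The fallback only covers responder unavailability; once the cached response passes `next_update`, the cache returns `None` and the caller has to decide whether to fail open or closed.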

TLS Deployment Scenarios for Web, API, Mobile, and Payment Systems

Unified principles for different channels (TLS 1.3 as a priority, TLS 1.2 with AEAD and PFS, proper certificate validation, elimination of downgrade vectors, and the use of security headers) are consistent across OWASP ASVS 4.0.3 and Mozilla SSL Guidelines 2024 (OWASP, 2021; Mozilla, 2024). For websites, the important items are HSTS (preload for critical domains), the absence of mixed content, chain optimization (short, complete, with OCSP stapling), and adaptive cipher suite prioritization by audience. In the API environment, mTLS is mandated for internal communications and OAuth2/OIDC for public ones, with automated certificate rotation (RFC 8555, 2019). On large sites, such practices improve external scanner ratings and stabilize TTFB.

For web frontends, this means HSTS with preload list checking, CSP for mixed content blocking, and proper redirects from HTTP to HTTPS that exclude open redirects (Chrome HSTS Preload, 2024; W3C Mixed Content, 2020). For APIs, HTTP/2 and, where supported, HTTP/3/QUIC are considered for mobile users, which reduces sensitivity to packet loss (IETF RFC 9114/9000, 2022/2021). In payment systems, domain segregation, OV/EV certificates, avoidance of wildcard certificates, and regular PCI scans are standard. The practical benefit is robust audit results and predictable operation.
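Preload list checking can be partly automated by validating the header before submission. The sketch below encodes the commonly published preload requirements (a `max-age` of at least one year, `includeSubDomains`, and `preload`); `parse_hsts` and `preload_ready` are hypothetical helper names, and the current submission rules should be confirmed against the preload list's own documentation.

```python
def parse_hsts(value):
    """Parse a Strict-Transport-Security value into {directive: argument}."""
    directives = {}
    for part in value.split(";"):
        name, _, arg = part.strip().partition("=")
        name = name.strip().lower()
        if name:
            directives[name] = arg.strip().strip('"')
    return directives


def preload_ready(value, min_max_age=31536000):
    """True if the header meets the assumed preload requirements."""
    directives = parse_hsts(value)
    try:
        max_age = int(directives.get("max-age", ""))
    except ValueError:
        return False  # max-age missing or not a number
    return (
        max_age >= min_max_age
        and "includesubdomains" in directives
        and "preload" in directives
    )
```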

TLS for Mobile Clients – Cipher Selection and Optimization

Mobile networks are characterized by high RTT and variable packet loss, so minimizing handshakes and selecting ciphers based on hardware capabilities is especially important. ChaCha20-Poly1305 shows advantages on ARM devices without hardware AES acceleration, while session resumption and 0-RTT (for idempotent GETs) reduce time to first byte (IETF RFC 8439, 2018; IETF RFC 8446, 2018). Cloudflare measurements show a 5–8% reduction in TTFB with ChaCha20 priority for eligible clients (Cloudflare, 2020). In a mobile banking case study in Azerbaijan, the combination of TLS 1.3, ChaCha20 priority, and session resumption reduced login time by ~200 ms. This confirms the value of adaptive cryptographic policies.
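One common way to implement "ChaCha20 priority for eligible clients" is to treat a client that lists ChaCha20-Poly1305 first as lacking fast AES and to honor that preference, while otherwise applying the server's own order. The sketch below models this selection logic in plain Python; it is similar in spirit to OpenSSL's `SSL_OP_PRIORITIZE_CHACHA` option but is not how any particular server is configured, and `select_suite` is a hypothetical name.

```python
CHACHA = "TLS_CHACHA20_POLY1305_SHA256"

# Server preference for clients that do not signal a ChaCha20 preference.
SERVER_PREFERENCE = [
    "TLS_AES_128_GCM_SHA256",
    "TLS_AES_256_GCM_SHA384",
    CHACHA,
]


def select_suite(client_offer):
    """Pick a TLS 1.3 suite, honoring a client that ranks ChaCha20 first."""
    if client_offer and client_offer[0] == CHACHA:
        return CHACHA  # client likely lacks hardware AES acceleration
    for suite in SERVER_PREFERENCE:
        if suite in client_offer:
            return suite
    return None  # no suite in common
```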

Optimization is complemented by HTTP/2/3 negotiated via ALPN, which improves resilience to packet loss and reduces head-of-line blocking (IETF RFC 9114, 2022; IETF RFC 7301, 2014). Control over 0-RTT is important: it is enabled only for GET requests and static data, while 1-RTT is forced for POST/PUT/DELETE, preventing replay of state-changing requests (IETF RFC 8446, 2018). Regular A/B tests across device and network segments confirm performance hypotheses and minimize regressions during TLS/HTTP library updates. This discipline ensures a consistent user experience across heterogeneous mobile environments.
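At the application layer, the 0-RTT rule can be enforced with the mechanism from RFC 8470: the TLS terminator marks requests received in early data with an `Early-Data: 1` header, and the origin answers non-safe methods with `425 Too Early` so the client retries after the handshake completes. The sketch below assumes such a header is forwarded by the terminator; `early_data_status` is a hypothetical helper.

```python
# Methods allowed in 0-RTT early data, following the policy described above.
SAFE_EARLY_DATA_METHODS = {"GET"}


def early_data_status(method, headers):
    """Return 425 if a replayable request arrived in early data, else None."""
    arrived_early = headers.get("Early-Data") == "1"
    if arrived_early and method.upper() not in SAFE_EARLY_DATA_METHODS:
        return 425  # Too Early: client should retry once the handshake is complete
    return None
```

A `None` result means the request can be processed normally; a GET handler still has to be genuinely idempotent for this policy to be safe.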

TLS in Payment Integrations – Requirements and Practices

Payment checkout pages and APIs must comply with PCI DSS v4.0, which prohibits TLS 1.0/1.1, requires TLS 1.2+ with AEAD and PFS, enforces strict certificate validation, and requires downgrade resistance (PCI SSC, 2022). HSTS and CSP are also recommended to prevent insecure redirects and insecurely embedded resources (OWASP ASVS, 2021). At the Baku processing center, TLS 1.3 with ECDHE and AES-256-GCM, preload-HSTS, and configuration monitoring helped the annual audit pass without any issues and with no downgrade incidents (PCI SSC, 2022; Chrome HSTS Preload, 2024). This set of measures meets industry expectations and mitigates operational risks.

A common architectural mistake is the use of wildcard certificates on payment domains and the combination of external and internal zones into a single SAN certificate, which complicates revocation and auditing. It is recommended to segregate domains, use OV/EV for critical façades, automate rotation, and regularly conduct external scans (SSL Labs/testssl.sh) and CT log monitoring (Google CT, 2018; SSL Labs, 2024). In this case, eliminating wildcards and switching to separate certificates sped up revocation to minutes and simplified proof of compliance during investigations. This improves manageability and transparency.
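Both mistakes can be caught by auditing the SAN list of each certificate. The sketch below assumes the DNS names have already been extracted from the certificate; `audit_san` is a hypothetical helper, and the internal-zone suffixes are placeholders to be replaced with the organization's own.

```python
def audit_san(names, internal_suffixes=(".internal", ".corp.example")):
    """Flag wildcard entries and certificates mixing internal and external zones."""
    issues = []
    if any(name.startswith("*.") for name in names):
        issues.append("wildcard")
    internal = [name for name in names if name.endswith(internal_suffixes)]
    if internal and len(internal) < len(names):
        issues.append("mixed-zones")  # internal and external names share one cert
    return issues
```

An empty result means the certificate covers only explicitly named hosts from a single zone, which is what keeps revocation scoped to one service.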

How to combine CDN/WAF and custom certificates

Combining CDN/WAF with custom certificates requires consistent TLS profiles and synchronized expiration dates at the perimeter and origin. The practice of “custom SSL” on a CDN ensures control over the CA/chain and a unified view of the organization, while strict chain validation and OCSP stapling at the origin prevent validation errors (Cloudflare SSL for SaaS, 2023; Akamai Edge SSL, 2023). Synchronous rotation, monitoring of both edges, and handshake tests through CDN points of presence are recommended. This minimizes configuration discrepancies and false starts during renewals.

Relying on shared CDN certificates for critical domains and failing to control the provider’s TLS configuration is a mistake. It is recommended to request reports from the CDN on cipher profiles, versions, and HTTP/2/3 support, as well as conduct external checks from different geolocations (Mozilla SSL Guidelines, 2024; SSL Labs, 2024). In an e-commerce case, uploading an EV certificate to the CDN and synchronizing the timing with origin eliminated chain discrepancies and ensured consistent customer behavior. This maintains a consistent and predictable user experience across the global delivery network.

Technology

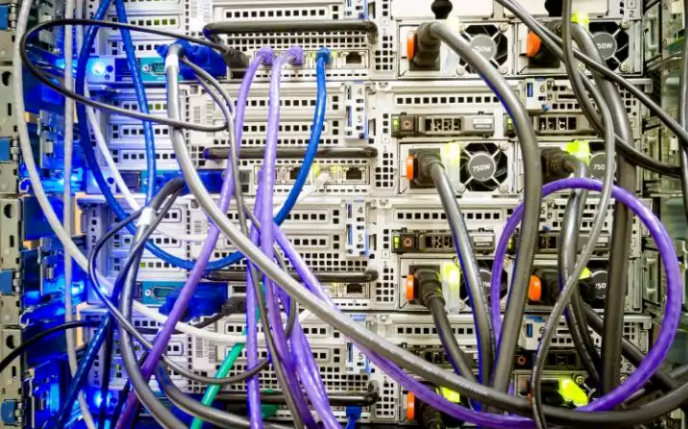

Lagos Eyes 250MW Data Centre Capacity by 2030

By Adedapo Adesanya

The Lagos State government plans to expand the city’s data centre capacity to over 250 megawatts (MW) by 2030 as part of efforts to strengthen its digital infrastructure ecosystem.

This was disclosed by the state’s Commissioner for Innovation, Science, and Technology, Mr Olatubosun Alake, at the launch of the Kasi Cloud LOS1 data centre facility in Lekki. Nigeria Sovereign Investment Authority (NSIA) invested in Kasi Cloud through an $8 million convertible loan note in 2021.

Mr Alake said Lagos already hosts nearly three-quarters of Nigeria’s commercial data centre capacity, adding that the government intends to expand its infrastructure footprint significantly over the next five years.

“There are about 146 additional megawatt data centres planned in the pipeline,” he said. “We envisage that by 2030, we would have over 250 megawatts of data centre capacity in Lagos, three times the current capacity growth.”

The expansion comes as demand for cloud services, AI computing power, and local data storage continues to grow across Nigeria’s digital economy, with Lagos at the forefront, housing thousands of businesses and startups.

Mr Alake said the Kasi Cloud facility represents Lagos’ entry into “large-scale hyperscale AI infrastructure,” signalling the state’s ambition to evolve beyond being known primarily as a startup hub into a major centre for digital infrastructure and AI computing.

“Lagos is no longer simply a startup city,” he said. “It is an infrastructure city.”

The Kasi LOS1 facility is designed as a 40MW hyperscale data centre campus, beginning operations with an initial 7.2MW IT load.

According to Mr Alake, the facility includes advanced GPU computing infrastructure powered by Nvidia H100 and H200 chips, alongside liquid cooling systems and cloud infrastructure services designed to support AI workloads.

The Lagos State government believes such infrastructure will become critical as AI adoption accelerates globally.

Mr Alake said the state is investing in fibre optic networks, smart city technologies, university innovation programmes, and digital government systems to prepare for the transition.

“The AI economy is going to require hundreds of megawatts,” he said. “The market has already made its decision about where digital infrastructure belongs.”

On his part, Mr Johnson Agbogun, co-founder and chief executive officer of Kasi Cloud, said the project was built to reduce Nigeria’s dependence on foreign cloud infrastructure and give African businesses more control over how their data and AI systems are developed.

“Nigerian enterprises are currently spending $850 million every year on foreign cloud infrastructure,” he said. “Every naira spent abroad on cloud and AI infrastructure helps build capabilities somewhere else.”

He added that the facility runs GPU-powered AI workloads from local enterprises and described the Lekki campus as “the beginning of Nigeria’s AI factory.”

“As artificial intelligence reshapes economies globally, the nations that control their own compute infrastructure and data will be the ones positioned to lead,” added Mr Kolawole Owodunni, NSIA’s Executive Director and Chief Information Officer.

Technology

Google I/O 2026: 4 Major Updates That Are Changing How Google Search Works

The goal of Google Search has always been simple: to help you ask anything on your mind. Whether it is a quick fact to help with your daily hustle or a complex question about starting a new business, Nigerians rely on Search every single day.

Over the last year, Google has rapidly reimagined what Search can do with AI. The momentum has been incredible—just one year after its debut, AI Mode has surpassed one billion monthly users globally. As people have realised just how much more Search can do for them, they are searching more than ever before, reaching an all-time high in search queries last quarter. Today at Google I/O, Google shared the next step in its journey to bring together the best of a search engine with the best of AI.

To power this next chapter, Google is officially upgrading Search with Gemini 3.5 Flash as the new default model in AI Mode for everyone worldwide. Delivering sustained frontier performance for agents and coding, Gemini 3.5 Flash is the engine driving the new era of AI-powered Search. Because curiosity doesn’t always fit into standard keywords, this powerful AI model is transforming Search from a tool that simply finds information into an intelligent platform capable of reasoning, monitoring the web, and executing complex tasks on your behalf.

Here is a look at the four biggest AI-powered announcements coming to Google Search:

1. A Completely Reimagined Search Box

Google is introducing the biggest upgrade to its Search box in over 25 years. Now completely reimagined with AI, the new intelligent Search box dynamically expands to give you the space to describe exactly what you need. It goes beyond simple autocomplete by anticipating your intent and helping you phrase your questions. You are no longer limited to typing; you can now search using text, images, files, videos, or even Chrome tabs as inputs. Additionally, Google is making it easier to ask follow-up questions directly from an AI Overview, flowing naturally into a conversational back-and-forth where your context stays with you as you explore.

2. New Search Agents That Work in the Background

We are entering the era of Search agents, where you can create and manage multiple AI agents directly in Search. Google is launching “Information agents” that operate in the background 24/7. These agents intelligently scan the web—alongside fresh data on finance, shopping, and sports—to monitor for changes related to your specific questions. For example, if you are house hunting, your agent will continuously scan the market and notify you the moment a listing matches your exact criteria. Furthermore, Search is expanding its agentic booking capabilities; you can soon share specific criteria (like a late-night private karaoke room) and Search will pull the latest pricing and links to finish booking. For certain categories, Google can even call businesses on your behalf.

3. Custom Mini-Apps and Visuals Built Just for You

Search is no longer just returning links; it is now building the ideal response in the perfect format for your query entirely on the fly. By bringing the power of Google Antigravity and the agentic coding capabilities of Gemini 3.5 Flash into Search, users will get a custom “Generative UI.” This means Search can design custom layouts, interactive visuals, tables, graphs, or simulations in real-time. But it goes a step further: if you have an ongoing task, like establishing a new health routine, Search can actually code a custom fitness tracker or mini-app for you. These custom dashboards tap into real-time sources like live maps and weather, giving you a personalised tracker you can return to again and again.

4. Expanded Personal Intelligence Without a Subscription

For AI to be truly helpful, it shouldn’t just know the world’s information—it should understand your personal context, too. To achieve this, Google is expanding Personal Intelligence in AI Mode to more people in nearly 200 countries and territories across 98 languages. Crucially, this is being rolled out with no subscription required. Users can securely connect apps like Gmail, Google Photos, and soon Google Calendar directly to Search. Designed with transparency and choice at its heart, this allows you to safely ask Search to find information buried in your own personal files, always keeping you in complete control of your connected data.

Technology

Fibre Cuts: Expert Blames Road Construction for 60% of Network Outages

By Modupe Gbadeyanka

The chief executive of Dimensions Data Limited, Mr Gbenga Olabiyi, has blamed road construction for 60 per cent of network outages caused by fibre cuts.

Speaking recently at the National Dig-Once Policy Forum, which marked the 8th Policy Implementation Assisted Forum (PIAFo), he drew attention to the gap between the infrastructure Nigeria has and what it can actually deliver if a coordinated framework is adopted.

“Nigeria currently has about 35,000 kilometres of fibre in the ground, yet only 16 per cent of Nigerians are connected to it. Broadband penetration stands at 45 per cent. Lagos alone has a penetration rate of over 70 per cent,” Mr Olabiyi said.

He emphasised that the failure to address the missing fibre link over the years has led to saturation of connectivity in urban centres, while the hinterlands are left either unconnected or poorly served.

At the same programme, convened by Mr Omobayo Azeez, stakeholders in the telecommunications sector called for the adoption of the dig-once policy to lower the costs of fibre deployment, reduce infrastructure damage, improve safety, and shorten rollout timelines.

Quoting the Nigerian Communications Commission (NCC), it was noted that of the 50,000 fibre cut incidents recorded in a year, about 30,000, which represents 60 per cent, occurred during road construction and rehabilitation.

Stakeholders thus called for a review of existing road construction and building codes to accommodate the installation of fibre conduits in the original design standard of the infrastructure planning.

“What Dig-Once offers is an opportunity to correct this,” the president of the Association of Telecommunication Companies of Nigeria, Mr Tony Emoekpere, stated.

He added that even operators frequently damage one another’s cables during repeated digging, thus increasing repair costs and service disruptions.

The Deputy Director of Strategic Business Initiatives at ipNX Nigeria Limited, Mr Segun Okuneye, said under the dig-once policy, road contractors should install ducts during construction.

He said the repeated excavation of roads leads to incessant destruction of existing infrastructure and triggers service blackouts, with operators bearing the additional costs of repairing or replacing the fibre.

Also, the chairman of the Association of Licensed Telecom Operators of Nigeria (ALTON), Mr Gbenga Adebayo, said operators should focus not just on digging once but on eliminating unnecessary digging altogether by sharing existing infrastructure and jointly replacing legacy cables.

“Early fibres laid 15 to 20 years ago are now ageing, and the industry needs a plan to replace them without everyone digging the same routes again,” he said.
