Media OutReach

IMDA Refreshes Skills Framework for Media and Continues to Support Virtual Production Capabilities and Training

– New company-led apprenticeship programme with media companies to expand job and training opportunities in the industry

– 28 Virtual Production projects supported, and 650 media professionals trained to date under the Virtual Production Innovation fund

SINGAPORE – Media OutReach Newswire – 4 December 2024 – The Infocomm Media Development Authority (IMDA) has worked closely with the media industry to refresh the Skills Framework for Media, which will provide up-to-date sector information, job roles, and existing and emerging skills for media practitioners, including in new tech areas such as Virtual Production (VP) and Generative Artificial Intelligence (GenAI). To provide locals with more job and training opportunities in new tech areas, IMDA is also introducing a company-led apprenticeship programme with media companies.

2. IMDA has been advancing VP applications in Singapore’s media industry since 2023. To date, there have been 28 VP content projects, and 650 media professionals have been trained in VP through these content projects and workshops, supported by the S$30 million VP Innovation Fund announced last year. These updates were announced by Singapore’s Senior Minister of State for Digital Development and Information (MDDI) & Ministry of National Development (MND), Mr Tan Kiat How, at the opening of the Asia TV Forum and Market (ATF) today, an event of the Singapore Media Festival (SMF). Hosted by IMDA, the SMF celebrates its 11th edition with the theme “Make It Here”, inspiring the region’s media community to create, connect, and realise their visions.

Refreshed Guide for the Future of Media Careers

3. As Singapore’s media market expands, employment opportunities are also on the rise. There were 24,960 media professionals employed across the economy in 2023, reflecting a compound annual growth rate of 7% since 2018[1]. The refreshed Skills Framework for Media provides a comprehensive roadmap for these media professionals, charting the future of media careers and talent development. The framework was developed by IMDA in partnership with SkillsFuture Singapore (SSG), industry associations like the Singapore Association of Motion Picture Professionals, and Institutions of Higher Learning (IHLs), after extensive consultation with around 150 media representatives across industry, training providers, IHLs and freelancers. This consultation helps ensure the framework meets the needs of a dynamic media landscape.

4. The refreshed Skills Framework identifies 195 job roles across 9 tracks, with 230 technical skills and competencies covering existing and emerging areas in media such as VP, GenAI, content production, production technical services, and more. Media practitioners can use the Skills Framework to upskill and remain relevant in today’s media landscape, while employers and training providers can draw on it to structure learning and training opportunities. The framework was first launched in 2018 jointly by IMDA, SSG and Workforce Singapore (WSG), in consultation with Singapore’s media industry.

5. IMDA will also offer more company-led on-the-job training opportunities through apprenticeships with media companies, in line with the new skills added to the refreshed framework, including VP. This is a new initiative and, as a start, IMDA will partner seven media companies to offer over 70 apprenticeship opportunities across content production, business management and technical roles, which will further deepen practical skills development and ensure talent is industry-ready.

6. In his opening speech, SMS Tan Kiat How said, “The Asia TV Forum and Market and the Singapore Media Festival are platforms for networking and collaborations. As Asia’s entertainment content industry grows, Singapore will be your partner to tell our stories to the world, and for the world to discover the talents and gems in Asia. Today, we are taking an important step to do so by investing in the future of media – our media professionals – so that they are equipped with the right skills, technology, and platforms to excel in this dynamic industry.”

New Virtual Production Projects and Talent Supported

7. The use of VP in Singapore’s media industry continues to progress with the launch of three full-scale VP studios that can support international projects developed with VP technology. These are Aux Infinite Studios, Oceanus Media Global and X3D Studio and they are also providing VP training. Next year, media professionals can look forward to specialised training opportunities for job roles such as VP supervisors from local and overseas VP experts.

8. There have also been 28 VP content projects which leveraged VP technology to open creative possibilities and overcome physical limitations. For example, film director Ian Wee from Reelisations Pte Ltd used VP in his latest content project “Time Apart” to execute challenging time-lapse sequences across different time periods. Ian was a participant in the National Film and Television School (NFTS) Certificate in Virtual Production course in April 2023. In another example, Glenn Chan from Sonder Films used 3D scanning technology to develop and integrate 3D CGI characters into virtual environments for his short-form VP project “The Old World”. Glenn was a participant in the Aux-XON SG x Korea VP Masterclass conducted earlier this year.

9. For more details on the Singapore Media Festival and the Asia TV Forum and Market, please visit www.imda.gov.sg/smf. To read the latest Skills Framework for Media, visit https://www.imda.gov.sg/how-we-can-help/media-manpower-plan/skills-framework-for-media-sfw-for-media.

Hashtag: #SGMediaFest


The issuer is solely responsible for the content of this announcement.

About the Singapore Media Festival

The Singapore Media Festival, hosted by the Infocomm Media Development Authority (IMDA), proudly returns for its 11th edition as one of Asia’s premier international media industry platforms. From 28 November to 8 December 2024, Singapore will be the focal point for Asia’s media community, showcasing diverse media innovations, forging industry deals, and presenting Singapore’s world-class content. This year’s festival, themed “Make It Here,” aims to inspire the region’s media talent to create, connect, and realise their visions. The event will bring together media professionals, industry leaders, creators, and consumers through the Singapore International Film Festival (SGIFF), Asia TV Forum & Market (ATF), Singapore Comic Con (SGCC), and Nas Summit Asia (NAS).

For more information, please visit: www.imda.gov.sg/smf.

About Asia TV Forum & Market (ATF) 2024

3 December 2024: The ATF Leaders Dialogue

4 – 6 December 2024: Market & Conference

Into its 25th edition, Asia TV Forum & Market (ATF) – the region’s co-production & entertainment content market and conference – is the proven industry platform to acquire knowledge, network, buy, sell, finance, distribute and co-produce across all platforms. It is the premier stage in Asia to engage with the entertainment industry’s top players from around the world. It’s where the best minds meet, and the future of Asia’s content is shaped.

For more information, please visit www.asiatvforum.com

About Infocomm Media Development Authority

The Infocomm Media Development Authority (IMDA) leads Singapore’s digital transformation by developing a vibrant digital economy and an inclusive digital society. As Architects of Singapore’s Digital Future, we foster growth in Infocomm Technology and Media sectors in concert with progressive regulations, harnessing frontier technologies, and developing local talent and digital infrastructure ecosystems to establish Singapore as a digital metropolis.

For more news and information, visit www.imda.gov.sg or follow IMDA on LinkedIn (IMDAsg) and Instagram (@imdasg).

Media OutReach

ICONSIAM’s ‘THAICONIC SONGKRAN CELEBRATION 2026’ to Immerse Bangkok with Thailand’s Most Spectacular Water Festival

A UNESCO-Recognized Festival Reimagined Through Immersive Cultural Experiences, Iconic Entertainment, and Thailand’s Most Breathtaking Chao Phraya Riverfront Setting from April 10–15, 2026

BANGKOK, THAILAND –

A Global Celebration Driving Thailand’s Festival Economy

Mr. Supoj Chaiwatsirikul, Managing Director of ICONSIAM Co., Ltd., stated, “Songkran remains one of the most significant drivers of Thailand’s festival economy, particularly across the tourism, retail, and hospitality sectors. According to the Tourism Authority of Thailand, this year’s Songkran period is projected to generate over 30.35 billion baht in domestic economic circulation—an increase of 6% from the previous year, with approximately 500,000 international visitors expected to travel to Thailand, reflecting a 4% year-on-year growth. These figures underscore Songkran’s growing status as a truly global festival and one of Thailand’s most powerful attractions for international travelers.

As a leader in creating iconic, world-class experiences, ICONSIAM continues to elevate ‘ICONSIAM THAICONIC SONGKRAN 2026’ as a landmark celebration that showcases the rare and enduring beauty of Thai cultural traditions. Under the concept of ‘Splashing Fun, Joyful Celebrations, and Auspicious Thai New Year,’ the event brings together the very best of Thailand into one extraordinary riverside experience along the Chao Phraya River. More than a celebration, this festival is a powerful expression of national pride and cultural identity, reinforcing ICONSIAM’s position as a Global Experiential Destination. We are confident that this year’s event will further strengthen Bangkok’s reputation as one of the world’s most compelling cultural destinations, while driving tourism growth and stimulating economic momentum throughout the second quarter of the year.”

Highlights of the “4 THAICONIC Experiences – The Ultimate Songkran Journey”

- THAICONIC WATER FESTIVAL – The Ultimate Riverside Water Experience

Experience Songkran like never before with an extraordinary water playground inspired by Thailand’s beloved national symbol, the elephant. At its heart stands a spectacular 9-meter-tall water-spraying installation created in collaboration with Dee SweetDrug Studio. The vibrant space also features a dedicated Kids Zone for family-friendly fun, alongside solar-powered drying zones, reflecting ICONSIAM’s commitment to sustainability.

- THAICONIC ENTERTAINMENT – A Festival of Modern Icons

Immerse in an electrifying lineup of Thailand’s top artists and rising stars through dynamic mini concerts and live performances throughout the six-day celebration. Artists include Tle–Firstone, TeeTee–Por, Auau-Save, Daou–Offroad, KT Kratae, New Country, Sornram Namphet, and more.

- THAICONIC HERITAGE – A Living Showcase of Thai Identity

Witness a magnificent Songkran parade that brings Thailand’s cultural legacy to life through elaborately designed floats inspired by historical eras. For the first time, cultural icons LingOrm, a popular Thai actress duo, and 4EVE, Thailand’s leading T-pop girl group, embody the Songkran Goddess “Rakshasadevi,” reinterpreting Thai tradition through a contemporary global lens. Visitors can further explore authentic Thai culture through traditional performances and regional culinary delights at SOOKSIAM, alongside over 170 dining options across ICONSIAM.

- THAICONIC BLESSED BEGINNINGS – A Sacred Start to the Thai New Year

Embrace the spiritual essence of Songkran through a revered Buddha bathing ritual at River Park. Enhanced with holy water from nine esteemed temples across Thailand, this sacred ceremony symbolizes prosperity, renewal, and auspicious beginnings for the year ahead.

A Must-Visit Songkran Destination in Bangkok

Seamlessly blending cultural authenticity, rich traditions, and large-scale entertainment, ICONSIAM continues to redefine Songkran as a truly global celebration.

Join us at “ICONSIAM THAICONIC SONGKRAN CELEBRATION 2026,” where Thailand’s most cherished tradition is brought to life in an unforgettable riverside experience of water, culture, and joy—set against the iconic Chao Phraya River from April 10–15, 2026, with free entry for all visitors. As part of the celebration, international visitors dressed in traditional Thai attire will receive a 300-baht gift card, adding a special touch to their cultural experience.

For more information, please call 1338 or visit Facebook: ICONSIAM.

Hashtag: #ICONSIAMSongkran #ICONSIAM #SongkranFestival2026 #GlobalFestival #Thailand

The issuer is solely responsible for the content of this announcement.

Media OutReach

Money20/20 Asia Elevates Its 2026 Agenda with the Launch of The Intersection Stage, Featuring the Industry’s Most Influential Voices

Industry Leaders, Regulators, and Innovators to Convene in Bangkok at the Intersection Where Digital Assets and Traditional Banking Enter a New Era of Collaboration

BANGKOK, THAILAND – Media OutReach Newswire – 3 April 2026 – Money20/20, the world’s leading fintech show and the place where money does business, today announced the introduction of The Intersection Stage at Money20/20 Asia, taking place on April 21-23 at the Queen Sirikit National Convention Center in Bangkok and bringing together the region’s most powerful voices across banking, payments, digital assets, and financial innovation.

This year’s theme, “From Infrastructure to Impact – Where Technology Meets Humanity,” underscores how the Intersection Stage will explore the real-world outcomes of Traditional Finance and Decentralized Finance convergence across APAC, addressing how banks, fintechs, and emerging technologies are reshaping the global financial ecosystem. The stage brings together leaders from major financial institutions and well-known fintech companies to discuss how innovation, regulation, and new financial infrastructure are transforming areas such as digital assets, trust and cybersecurity, and cross-border payments. [1]

Siva Kumar, APAC Legal Director, Sumsub, said, “The convergence of TradFi and DeFi can only succeed if trust, identity, and compliance evolve alongside technology. Asia is leading this shift by adopting regulatory models that enable innovation without compromising security. At Sumsub, we’re witnessing institutions accelerate digital identity and verification standards at unprecedented speed. The Intersection Stage brings these critical stakeholders together to turn regulatory progress into real‑world impact.”

Speakers include Siddharth Gupta of Bank of America, Dhiraj Bajaj of Standard Chartered Bank, Fangfang Jiang of the International Finance Corporation, Ran Goldi, SVP Payments & Network, Fireblocks and Kaushik Sthankiya of Kraken, who will share insights on regulatory innovation, digital asset adoption, developments in stablecoin, tokenization, blockchain‑enabled settlement, and how new payment rails are enabling faster and more efficient cross-border transactions.

“For decades, Traditional Finance aimed to protect the system while Decentralized Finance wanted to reinvent it. Today, these two worlds are converging where digital money moves faster than ever,” said Danny Levy, EVP & Managing Director for APAC & the Middle East at Money20/20. “The Intersection Stage brings together the regulators and innovators driving the frameworks that will guide the next decade of global finance.”

The broader 2026 program also features keynote speakers such as Joseph Chan, Under Secretary for Financial Services and the Treasury of the Government of Hong Kong SAR; Shahril Azuar Jimin, Group Chief Sustainability Officer at Maybank; and Sunita Kannan, Global Head of AI Product & Strategy at Microsoft, underscoring the calibre of leadership shaping the future of finance across the region.

From Asia’s pioneering regulatory sandboxes and CBDC initiatives to the Genius Act in the US and MiCA in Europe, regulated institutions like AMINA Bank are at the forefront of this transformation, particularly in navigating the evolving regulatory landscape across key markets.

Cora Ang, Head of Legal & Compliance APAC, AMINA Bank, said, “Asia is demonstrating what responsible innovation truly looks like. As digital assets, tokenization, and new payment rails gain momentum, strong legal and compliance frameworks are essential to scaling them safely. At AMINA Bank, we see the region embracing this balance with clarity and ambition. The Intersection Stage at Money20/20 Asia is the perfect forum to advance these conversations and align the industry on what the next generation of financial infrastructure should be.”

Key Sessions on The Intersection Stage

- Day 1: Tuesday, 21 April 2026 at 15:40 – Banking on Digital Transformation 101

By Barbaros Uygun, Chief Executive Officer, Mox Bank Limited, Jessica Lam, Group Chief Strategy Officer, WeLab, Vivien Tan, Senior Vice President, Alliance Bank Malaysia, Andy Wu, General Manager, Hong Kong, Yusys Technologies, Rupa Ramamurthy, Senior EVP, Banking Operations, TP

- Day 1: Tuesday, 21 April 2026 at 12:00 – Building the Golden Record for Tokenised Asset Markets

By Etelka Bogardi, Partner, Reed Smith Singapore, Aaron Gwak, CEO & Founder, Libeara, Alvin Chia, Head of Digital Assets Innovation APAC, Northern Trust, moderated by Tanzeel Akhtar, Journalist, Morley Sterling LLC

- Day 2: Wednesday, 22 April 2026 at 10:00 – The Rise of Blockchain and Stablecoin Payment Rails

By Tran Hung, CEO, Uquid, Paul Veradittakit, Managing Partner, Pantera Capital, Maggie Wu, Co-Founder & CEO, VelaFi, Facilitated by Amanda Pecanha, Chief Compliance Officer, Trace Finance

- Day 2: Wednesday, 22 April 2026 at 15:45 – How Digital Asset Ecosystems Will Redefine Money

By Dhiraj Bajaj, Global Head of FI, Transaction Banking, Standard Chartered Bank, Julia Zhou, Chief Operating Officer, Caladan, Giorgia Pellizzari, Chief Product Officer & Head of Custody, Hex Trust, Dr. Karin Boonlertvanich, Executive Vice President, KASIKORNBANK & Chairperson of the Board, Orbix Group

- Day 3: Thursday, 23 April 2026 at 10:25 – Money’s Next Evolution, Stablecoins, CBDCs and the New Payment Stack

By Rahul Advani, Global Co-Head of Policy, Ripple, Lissele Pratt, Founder, Capitalixe, Bhau Kotecha, Co-Founder, Paxos Labs, Maria Oldham, COO, Yellow Card, moderated by David Birch, Global Ambassador, Consult Hyperion

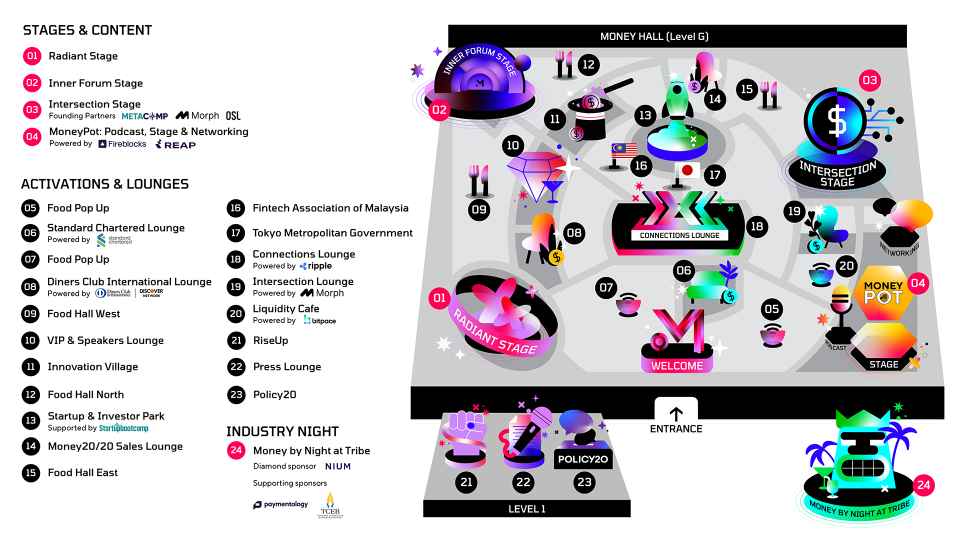

Alongside the Intersection Stage, Money20/20 Asia will feature three additional stages: The Radiant Stage, uniting Asia’s most influential industry voices; The Inner Forum Stage for deep‑dive sessions and workshops; and The MoneyPot Stage for live podcasting and networking experiences, creating a comprehensive ecosystem for learning, debate, and collaboration.

The show brings together leaders from more than 120 banks and the world’s largest payment providers, including Standard Chartered, Bank of America, Citi, Deutsche Bank, Maybank, and J.P. Morgan to name a few. Experts from leading payment providers including Visa, Nium, Thunes, Mastercard, Razorpay, PayPal, and Fiserv will discuss the evolution of payments across the region.

The show will also host the Startup & Investor Park, where 20 standout APAC startups will connect with global investors, enterprise partners, and decision‑makers, and compete for the Golden Ticket to the 2026 Startupbootcamp Sustainability Singapore Accelerator. [2]

Attending media can register for a press pass HERE and view the full agenda HERE.

Hashtag: #Money20/20

The issuer is solely responsible for the content of this announcement.

Media OutReach

Sanya, China Deepens Tourism Ties with Malaysia - Exclusive Benefits Launched for Malaysian Tourists, Ushering in a Tropical Island Getaway at a Moment’s Notice

In addition, the Sanya Tourism Development Bureau has entered into cooperative exchanges with the Malaysian Association of Tour and Travel Agents (MATTA) and the Malaysian Budget Hotel Association (MBHA) to strengthen synergy in tourism management, product development, and visitor exchange. The newly established Sanya Tourism Overseas Promotion (Malaysia) Liaison Office will serve as a one-stop information and booking platform for Malaysian travelers, reinforcing Sanya’s position as a preferred gateway to China.

Seamless Entry, Fully Upgraded Travel Convenience

Visa-free access with zero barriers

Under China’s visa-free policy for Hainan, Malaysian citizens may enter with only a passport and stay for up to 15 days—no prior visa, financial proof, or invitation letter is required, allowing for truly spontaneous getaways.

Faster flights, greater efficiency

Direct flights from Kuala Lumpur to Sanya take just about three hours, with high occupancy rates during peak seasons reflecting strong demand. Plans to launch direct flights from Penang and Kota Kinabalu to Sanya are under discussion, promising greater accessibility and cost-effective travel options.

Streamlined clearance procedures

Efficiency is prioritized with paperless self-declaration at both Phoenix Cruise Port and Sanya Phoenix International Airport, significantly reducing clearance time. International arrival halls support payments via all major international credit cards, ensuring seamless transactions.

Comprehensive multilingual support

Multilingual support is in place across Sanya—from bilingual signage at attractions and hotels to a dedicated foreign-language service line via the 12345 hotline. The multilingual official website visitsanya.com provides comprehensive information, guaranteeing smooth communication for Malaysian tourists.

Exceptional Value: Exclusive Promotions for Malaysian Visitors

To offer a more value-packed holiday experience, Sanya has prepared tailored benefits and significant discounts, from customized packages to special Asian Beach Games offers, demonstrating its warm hospitality.

The newly established Sanya Tourism Overseas Promotion Liaison Office, in collaboration with the Malaysian Budget Hotel Association and the Malaysian Association of Tour and Travel Agents, will launch specially curated travel packages that align with local preferences.

On Trip.com, the “Travel with the Asian Beach Games” promotion features discounted Sanya tourism products, covering hotels, attractions, and holiday packages, with exclusive offers for the Malaysian market.

The 2026 Asian Beach Games bring additional surprises: popular attractions like Wuzhizhou Island and Atlantis Sanya offer tickets at up to 73% off. Hotel rooms across categories, including family, couples, and resort suites, enjoy discounts of up to RMB 5,899. Local delicacies and international cuisines are available at discounts of around 48%, promising a delightful culinary journey.

Moreover, 118,000 Asian Beach Games tickets are now on sale globally. With just a few clicks online, visitors can secure their seats to experience the excitement of the 6th Asian Beach Games up close.

Diverse Experiences for Every Traveler

Sanya offers far more than just sun and sand. Whether traveling with family, as a couple, or with friends, everyone can find their ideal way to enjoy the destination.

For Families:

Atlantis Sanya Water Park, ranked among the “2026 Global Top 20 Water Parks” and tied for seventh place worldwide with Universal Orlando’s Volcano Bay, offers endless aquatic fun for children, while parents can explore the adjacent world-class duty-free complex for shopping and leisure.

For Adventure Seekers:

Wuzhizhou Island, known as “China’s Premier Diving Base,” features crystal-clear waters with visibility up to 27 meters and abundant coral reefs. A variety of water sports, including diving, parasailing, and jet skiing, deliver an adrenaline-filled coastal experience.

For Culture and Wellness:

The 108-meter-tall Guanyin statue at Nanshan and immersive Li and Miao ethnic cultural experiences provide a harmonious blend of spiritual reflection and cultural discovery.

For Luxury and Retail:

Sanya’s world-class duty-free complex brings together international brands, art exhibitions, and immersive experiences, creating a high-value shopping and lifestyle destination.

Sanya’s commitment to visitor convenience, diverse offerings, and tailored benefits continues to strengthen its appeal among Malaysian travelers. Since the beginning of this year, Hainan has welcomed a remarkable 204% increase in Malaysian tourist arrivals, making Malaysia one of the fastest-growing Southeast Asian source markets for Sanya. From visa-free access and direct flights to enhanced services and multifaceted experiences, Sanya is positioning itself as a leading gateway for Malaysian tourists to China.

As the Hainan Free Trade Port continues to develop, tourism cooperation between Sanya and Malaysia is poised to deepen, evolving from one-way attraction to mutual engagement. For those planning their first trip to China, Sanya offers an ideal starting point, where azure seas, golden shores, modern amenities, and heartfelt hospitality come together to create the perfect tropical escape.

Hashtag: #SanyaTourismDevelopmentBureau

The issuer is solely responsible for the content of this announcement.
