Technology

Experts, Regulators Warn on Rise of AI Voice Cloning Scam

By Adedapo Adesanya

Experts and regulators have warned that Artificial Intelligence (AI) scams using voice cloning are the new frontier for fraudsters targeting consumers.

According to the Southern African Fraud Prevention Service (SAFPS), impersonation attacks increased by 264 per cent in the first five months of the year compared with 2021.

According to iiDENTIFii, a remote biometric digital authentication and automated onboarding technology platform, the familiar version of the scam involves receiving a call, email or SMS that claims to come from the authorities and urgently requests payment.

“The details of the request are clear and professional and include personal information unique to you, so there is no reason to doubt it. This scam is fairly common, and the majority of consumers are on the lookout for it,” it noted.

“Now imagine receiving a call from a loved one and hearing their unmistakable voice on the other end of the line saying that they need money or your account information right away. This may sound like a fraud lifted straight out of science fiction, but – with the exponential development of AI tools – it is a growing reality,” it added in a statement.

Mr Gur Geva, founder and CEO of iiDENTIFii, said voice cloning has become a greater threat because the technology behind it is now cheaper and more accessible.

“The technology required to impersonate an individual has become cheaper, easier to use and more accessible. This means that it is simpler than ever before for a criminal to assume one aspect of a person’s identity.”

“Historically, voice has been seen as an intimate and infallible part of a person’s identity. For that reason, many businesses and financial institutions used it as a part of their identity verification toolbox,” he explained.

The threat has also worried regulators in the United States, where the Federal Trade Commission (FTC) last week issued an alert urging consumers to be vigilant for calls in which scammers sound exactly like their loved ones.

“All a criminal needs is a short audio clip of a family member’s voice – often scraped from social media – and a voice cloning program to stage an attack,” it warned.

iiDENTIFii warned that the potential of this technology is vast. Microsoft, for example, has recently piloted an AI tool that, with a short sample of a person’s voice, can generate audio in a wide range of different languages.

“While this has not been released for public use, it does illustrate how voice can be manipulated as a medium.”

Audio recognition technology has been an attractive security solution for financial services companies across the globe, with voice-based accounting enabling customers to deliver account instructions via voice command.

Voice biometrics offers real-time authentication, which replaces the need for security questions or even PINs, with companies like Barclays and Visa adopting voice-based authentication platforms for e-commerce.

“As voice-cloning becomes a viable threat, financial institutions need to be aware of the possibility of widespread fraud in voice-based interfaces. For example, a scammer could clone a consumer’s voice and transact on their behalf,” Mr Geva warned.

The rise of voice-cloning, according to the expert, illustrates the importance of sophisticated and multi-layered biometric authentication processes.

“Our experience, research and global insight at iiDENTIFii has led us to create a remote biometric digital verification technology that can authenticate a person in under 30 seconds, but more importantly, it triangulates the person’s identity, with their verified documentation and their liveness.

“While identity theft is growing in scale and sophistication, the tools we have at our disposal to prevent fraud are intelligent, scalable and up to the challenge,” he concluded.

Technology

PIAFo Leads Urgent Push for National Dig-Once Policy

Key players across Nigeria’s digital economy, telecommunications, and infrastructure ecosystem are set for the National Dig-Once Policy Forum to champion a new course towards increasing Nigeria’s digital backbone network to 125,000km of fibre-optic infrastructure.

The event, which marks the 8th edition of the Policy Implementation Assisted Forum (PIAFo), is a high-level industry dialogue aimed at accelerating the formulation and adoption of a National Dig-Once Policy as a critical enabler of safe, coordinated and cost-effective fibre infrastructure deployment in the country.

The forum, themed Accelerating Nigeria’s Digital Backbone: Dig Once Policy, Project BRIDGE and Strategies for Effective Fibre Deployment, is slated for Thursday, April 16, 2026, at Radisson Blu Hotel, Ikeja GRA, Lagos.

According to the organisers, Business Metrics Limited (BML), the Federal Government’s $2 billion Project BRIDGE initiative, which aims to expand fibre infrastructure by an additional 90,000km, from 35,000km to 125,000km by 2030, requires new measures to ensure the ambitious target is met and the mistakes of the past are avoided.

Industry stakeholders have identified that the success of a national connectivity backbone rollout depends largely on institutionalising a Dig Once Policy framework, which encourages the installation of fibre ducts and conduits whenever roads, railways, and other major public infrastructure are being constructed or rehabilitated.

According to industry data shared by the Nigerian Communications Commission, the lack of such a framework is taking a toll on the telecoms sector and the broadband drive, as operators recorded over 50,000 fibre cut incidents across the country in 2024, with more than 60 per cent occurring during road construction and rehabilitation activities. These disruptions have resulted in billions of naira in repair costs, network outages, and service degradation.

Telecom operators in Lagos State alone said they spent over N5 billion in 2024 to repair and replace damaged fibre infrastructure in the state, lamenting that the damage continues to slow down network upgrades and expansion.

Beyond infrastructure damage, telecom operators also face challenges such as high Right of Way (RoW) charges, uncoordinated civil works, and repeated excavation of roads for fibre deployment.

PIAFo 8.0 aims to address these challenges by fostering collaboration among stakeholders responsible for planning, financing, constructing, and maintaining Nigeria’s digital infrastructure.

Specifically, the forum seeks to align federal, state, and local infrastructure planning around a unified Dig-Once framework; strengthen collaboration between telecom operators, infrastructure companies, and public works authorities; translate policy intentions into actionable guidelines and implementation timelines; and build stakeholder support for Project BRIDGE and complementary national fibre initiatives.

Speaking about the event, Team Lead at Business Metrics Limited, Omobayo Azeez, said Nigeria is being denied access to the robust connectivity it should derive from up to eight high-capacity undersea cable networks landed on its shores because of difficulties around terrestrial fibre infrastructure expansion.

“The Project BRIDGE initiative should excite everyone because of its ambitious targets. But for those who understand the operating terrain and why it took the industry over 20 years to achieve around 35,000km of fibre network that the country currently operates for broadband connectivity, the project calls for a major shift in execution approach with the adoption of a National Dig-Once Policy as the starting point.

“PIAFo, now in its 8th edition, is again serving as the viable platform for representatives from government ministries and agencies, senior telecom executives, infrastructure companies, data centre operators, equipment manufacturers, state governments, and industry associations to chart the way forward.”

The forum will feature keynote addresses, expert panel discussions, and strategic networking sessions designed to drive pragmatic outcomes that will accelerate Nigeria’s journey toward a resilient and inclusive digital economy.

Technology

Nigeria, Finland Strengthen Ties on Digital Economy

By Adedapo Adesanya

The Nigerian government and the Republic of Finland have formalised a strategic partnership on digitalisation and innovation, signing a Memorandum of Understanding (MoU) aimed at expanding economic activities and strengthening cooperation in the digital sector.

The agreement was signed in Abuja by the Minister of Communications, Innovation and Digital Economy, Mr Bosun Tijani, and Mr Jarno Syrjälä, Under‑Secretary of State (International Trade) at Finland’s Ministry for Foreign Affairs.

According to a statement from the Special Assistant on Media and Communications to the communications minister, Mr Isime Esene, the MoU will establish a framework for collaboration across key areas, including digital government, emerging technologies, digital public infrastructure, cybersecurity, innovation ecosystems, and capacity building.

Mr Tijani described the signing as “an important step in strengthening the partnership between both countries as we work to build a more inclusive, innovation-driven digital economy.”

“This agreement is a significant next step following our engagements in Helsinki in February, where we met with key stakeholders, including Finnvera and Finnfund, and held productive discussions on advancing collaboration around digital infrastructure, the Data Exchange Platform, and opportunities for Finnish participation in Project Bridge.”

The Minister emphasised that the partnership would “unlock meaningful opportunities for both countries, enabling us to leverage digital transformation as a catalyst for sustainable growth and shared prosperity.”

Echoing this optimism, Mr Syrjälä said: “Finland is very pleased to deepen its partnership with Nigeria in building resilient, secure, and human‑centric digital societies. Digitalisation is at its best when it empowers people, strengthens trust, and creates new opportunities for innovation.”

“Nigeria is a key partner for Finland in Africa, and this MoU provides a strong basis for concrete cooperation between our governments, institutions, and private sectors. Together, we can advance digital solutions that are interoperable, future‑fit, and beneficial to both our nations,” he added.

Technology

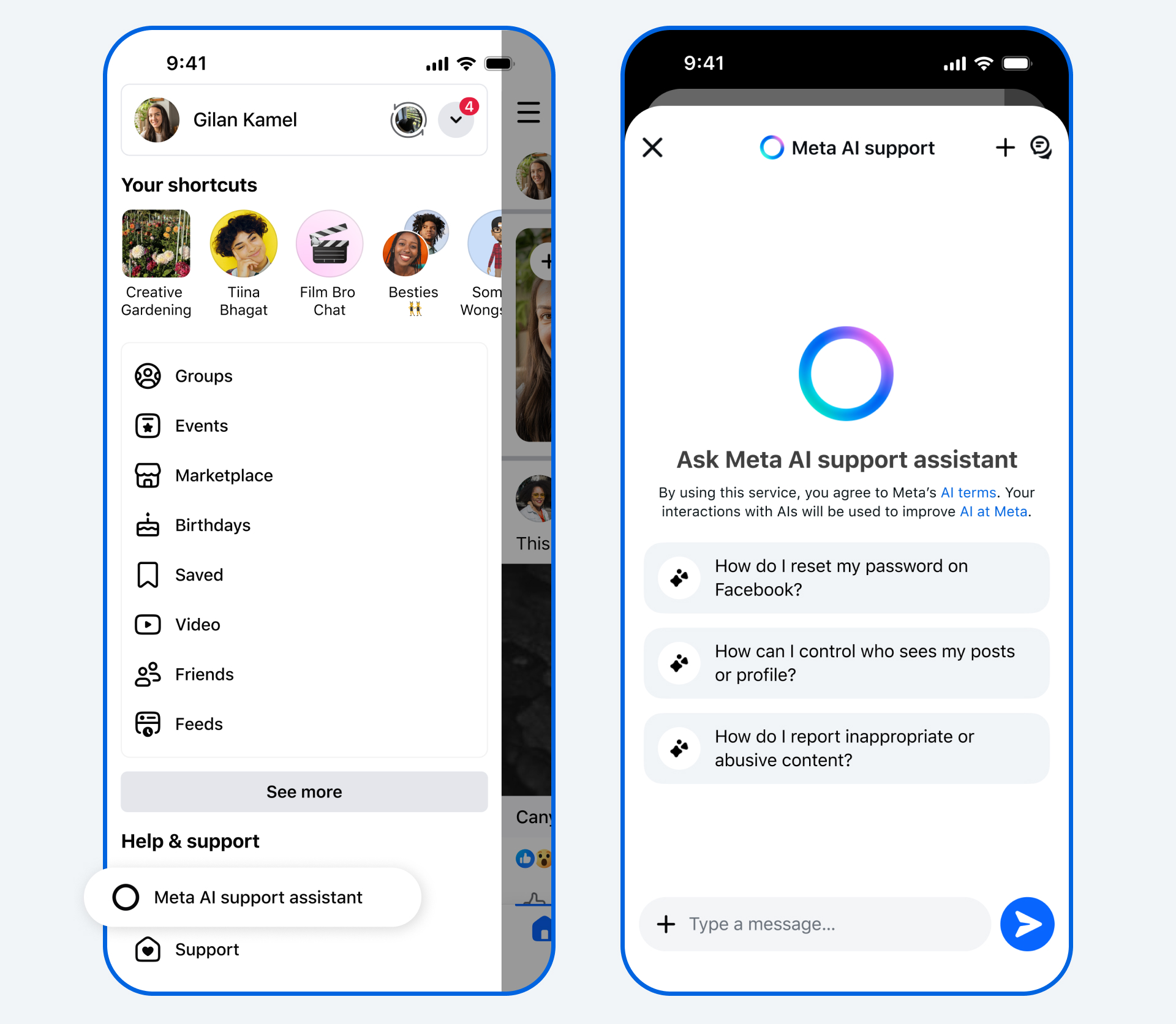

Meta Launches AI Support Assistant on Facebook, Instagram

By Aduragbemi Omiyale

Meta has launched new Artificial Intelligence (AI) tools designed to provide support for users of its applications.

The AI Support Assistant will work on the Facebook and Instagram apps, the company said in a statement.

The tools will help users to receive reliable and action-oriented assistance when needed.

In December, Meta previewed the AI support assistant, a tool designed to provide reliable, 24/7 help for nearly any support issue.

Now, Meta is rolling it out globally on the Facebook and Instagram apps for iOS and Android, and within Help Centre on Facebook and Instagram on desktop, with even more capabilities and ways to help.

The new Meta AI support assistant is designed to help resolve account problems from start to finish. It offers answers for any question, like notification settings or new features, and can also take action for users on a growing set of requests directly within Facebook and, in the future, on Instagram.

The feature can report scams, impersonation accounts, or problematic content, make it easier to see why content was taken down, provide appeal options, track what happens next, manage privacy settings, reset passwords, and update profile settings.

The Meta AI support assistant can respond to requests typically in under five seconds, dramatically reducing wait times compared to traditional help centre searches or seeking answers on external websites.

“The Meta AI support assistant is a major step in our work to deliver stronger support on our apps. In fact, among people who have provided feedback, the majority report a positive experience with the Meta AI support assistant. It’s rolling out now in all languages supported by Facebook and Instagram for support topics.

“We’re continuing to invest in AI-powered tools to make support more accessible, reliable, and effective – and we’ll keep evolving the Meta AI support assistant as more people use it and as the technology advances, so it continues to improve over time,” the organisation disclosed.

Meta has also deployed AI to improve content enforcement and reduce the chance that scammers trick people into giving away their login details, an effort that is finding and mitigating 5,000 scam attempts per day that no existing review team had caught before.

Meta said that over the next few years it would deploy these more advanced AI systems across its apps once they consistently perform better than its current methods of content enforcement, transforming its approach.

“As we do this, we’ll reduce our reliance on third-party vendors for content enforcement and focus on strengthening our internal systems and workforce.

“While we’ll still have people who review content, these systems will be able to take on work that’s better-suited to technology, like repetitive reviews of graphic content or areas where adversarial actors are constantly changing their tactics, such as with illicit drug sales or scams,” it stated.
