Technology

Trusted AI Needs Human at the Helm

By Linda Saunders

AI promises to make our jobs easier, our work more productive, and our businesses more valuable. New research from Slack finds that 80% of employees using generative AI tools are experiencing a boost in productivity — and that’s just the beginning.

And, with the introduction of AI assistants — including Salesforce’s own Einstein Copilot — the potential for businesses is only growing. AI assistants can already answer questions, generate content, and dynamically automate actions. And someday, these assistants will become digital sales and service agents, anticipating our needs and operating on our behalf.

But with each new AI advancement come new ethical concerns. It’s one thing if an AI assistant offers a bad product recommendation, but if it takes misguided actions on real-world concerns like personal finances or medical information, the stakes suddenly become much higher.

As we enter this new era of human-AI interaction, how can we harness the power of AI without opening ourselves up to dangerous risks?

Keeping a human at the helm

The AI revolution is an evolution. We’re taking quantum leaps forward every day, but we can’t always explain why AI does the things that it does — or eliminate every instance of inaccuracy, toxicity, or misinformation.

For these reasons, it’s important that we keep humans firmly in control of AI systems. But as AI becomes more and more sophisticated, it can be hard to figure out how to layer in that human touch. We’ve all heard of keeping “humans in the loop,” but with this new generation of AI, it’s sometimes just not realistic for us to engage in every AI interaction or review every AI-generated output.

That’s why, at Salesforce, we believe trusted AI needs a human at the helm. Instead of asking humans to intervene in every individual AI interaction, we’re designing more powerful, system-wide controls that put humans at the helm of AI outcomes and enable them to focus on the high-judgement items that most need their attention. In other words, humans aren’t always rowing the boat — but we’re very much steering the ship.

With a human at the helm, we can design AI systems that leverage the best of human and machine intelligence. For example, we can unlock incredible efficiencies by tasking AI with reviewing and summarising millions of customer profiles. At the same time, we can build trust by empowering humans to lean in and use their judgement in ways that AI can’t.

Making AI a copilot, not an autopilot

There’s a reason this generation of AI products is called copilots and not autopilots. As AI becomes more powerful and autonomous, making decisions and taking actions on individuals’ behalf, keeping a human at the helm becomes even more important. By combining the capabilities of AI with the strength of human judgement, we can make AI more effective and trustworthy.

Here are three ways we’re keeping humans at the helm of Salesforce AI:

- Prompt Builder Helps Us Automate in Authentic Ways: Prompts, or the instructions we send to generative AI models, are very powerful. A single, human-generated prompt can help guide millions of trusted outputs, but only if it’s constructed thoughtfully. With our newly announced Prompt Builder, we’re helping customers craft effective prompts by showing them the likely output in near real time, to help ensure they get the AI outcome they want. We’ve also added different edit modes within Prompt Builder that allow users to tune and revise their prompts to provide more helpful, accurate, and relevant results.

- Audit Trails Help Us Spot What We’ve Missed: Our Einstein Trust Layer offers a robust audit trail that allows customers to assess AI’s track record and pinpoint where their AI assistant went wrong and where it went right. These features help identify issues across large datasets that humans might not spot, and empower us to use our judgement to make adjustments based on the needs of our organisation. For example, Audit Trail can alert us when an AI tool’s outputs are flagged as “thumbs down” a certain number of times, a sign that the AI-generated outputs might not be meeting the business goals. By aggregating implicit feedback signals, like how often users edit an output before using it, Audit Trail can give us a bird’s eye view of our systems, allowing us to identify trends and take action.

- Data Controls Help Us Better Guard Our Data: AI is nothing without data. That’s why we’ve designed robust controls in Data Cloud, our fast-growing platform that helps bring siloed customer data together in one place, to help businesses act on their data securely. Data Cloud features help organisations harness data for AI-powered insights and intelligence. In addition, longstanding Salesforce core data controls like permission sets, access controls, and data classification metadata fields empower humans and AI models alike to protect and manage sensitive data.
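To make the audit trail idea more concrete, here is a minimal Python sketch of how explicit and implicit feedback could be aggregated per AI tool. It is an illustration only and does not use Salesforce's actual Audit Trail or Einstein Trust Layer APIs. The `FeedbackEvent` schema, the `summarise_feedback` function, the tool names, and the threshold of three thumbs-down flags are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FeedbackEvent:
    """One logged interaction with an AI-generated output (hypothetical schema)."""
    tool: str                # which AI tool or prompt produced the output
    thumbs_down: bool        # explicit negative feedback from the user
    edited_before_use: bool  # implicit signal: the user changed the output first


def summarise_feedback(events, thumbs_down_limit=3):
    """Aggregate feedback per tool and flag tools that reach the thumbs-down limit."""
    totals = defaultdict(lambda: {"outputs": 0, "thumbs_down": 0, "edited": 0})
    for event in events:
        stats = totals[event.tool]
        stats["outputs"] += 1
        stats["thumbs_down"] += int(event.thumbs_down)
        stats["edited"] += int(event.edited_before_use)

    report = {}
    for tool, stats in totals.items():
        report[tool] = {
            "thumbs_down": stats["thumbs_down"],
            # Share of outputs that users edited before using them.
            "edit_rate": stats["edited"] / stats["outputs"],
            # A human should take a closer look once the limit is reached.
            "needs_review": stats["thumbs_down"] >= thumbs_down_limit,
        }
    return report


# Made-up sample data, for illustration only.
events = [
    FeedbackEvent("email_draft", thumbs_down=True, edited_before_use=True),
    FeedbackEvent("email_draft", thumbs_down=True, edited_before_use=True),
    FeedbackEvent("email_draft", thumbs_down=True, edited_before_use=False),
    FeedbackEvent("email_draft", thumbs_down=False, edited_before_use=True),
    FeedbackEvent("case_summary", thumbs_down=False, edited_before_use=False),
    FeedbackEvent("case_summary", thumbs_down=True, edited_before_use=False),
]
report = summarise_feedback(events)
for tool, stats in report.items():
    print(tool, stats)
```

In this sketch the software only surfaces which tools deserve attention. Deciding what to change, such as revising a prompt or tightening a data control, stays with the person at the helm.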

Pioneering a new approach for the AI era

As the AI era continues to unfold, both humans and technology must evolve along with it. The AI revolution is not just about technological innovation — it’s also about empowering humans to sit successfully at the helm of AI, and use it in ways that are trustworthy and effective.

Our approach is evolving, and we are committed to continued research, learning, and multi-stakeholder collaboration on this topic. But with a human at the helm, we believe we can combine the best of human and machine intelligence for this new AI era — leaning into AI’s capabilities and freeing up humans to do what they do best: be creative, exercise their judgement, and connect more deeply with one another.

With AI and humans working together, we can create more productive businesses, more empowered employees, and ultimately, more trustworthy AI.

Linda Saunders is the Salesforce Director for Solution Engineering, Africa.

Technology

Nigeria, Finland Strengthen Ties on Digital Economy

By Adedapo Adesanya

The Nigerian government and the Republic of Finland have formalised a strategic partnership on digitalisation and innovation, signing a Memorandum of Understanding (MoU) aimed at expanding economic activities and strengthening cooperation in the digital sector.

The agreement was signed in Abuja by the Minister of Communications, Innovation and Digital Economy, Mr Bosun Tijani, and Mr Jarno Syrjälä, Under‑Secretary of State (International Trade) at Finland’s Ministry for Foreign Affairs.

According to a statement from the Special Assistant on Media and Communications to the communications minister, Mr Isime Esene, the MoU will establish a framework for collaboration across key areas, including digital government, emerging technologies, digital public infrastructure, cybersecurity, innovation ecosystems, and capacity building.

Mr Tijani described the signing as “an important step in strengthening the partnership between both countries as we work to build a more inclusive, innovation-driven digital economy.”

“This agreement is a significant next step following our engagements in Helsinki in February, where we met with key stakeholders, including Finnvera and Finnfund, and held productive discussions on advancing collaboration around digital infrastructure, the Data Exchange Platform, and opportunities for Finnish participation in Project Bridge.”

The Minister emphasised that the partnership would “unlock meaningful opportunities for both countries, enabling us to leverage digital transformation as a catalyst for sustainable growth and shared prosperity.”

Echoing this optimism, Mr Syrjälä said: “Finland is very pleased to deepen its partnership with Nigeria in building resilient, secure, and human‑centric digital societies. Digitalisation is at its best when it empowers people, strengthens trust, and creates new opportunities for innovation.”

“Nigeria is a key partner for Finland in Africa, and this MoU provides a strong basis for concrete cooperation between our governments, institutions, and private sectors. Together, we can advance digital solutions that are interoperable, future‑fit, and beneficial to both our nations,” he added.

Technology

Meta Launches AI Support Assistant on Facebook, Instagram

By Aduragbemi Omiyale

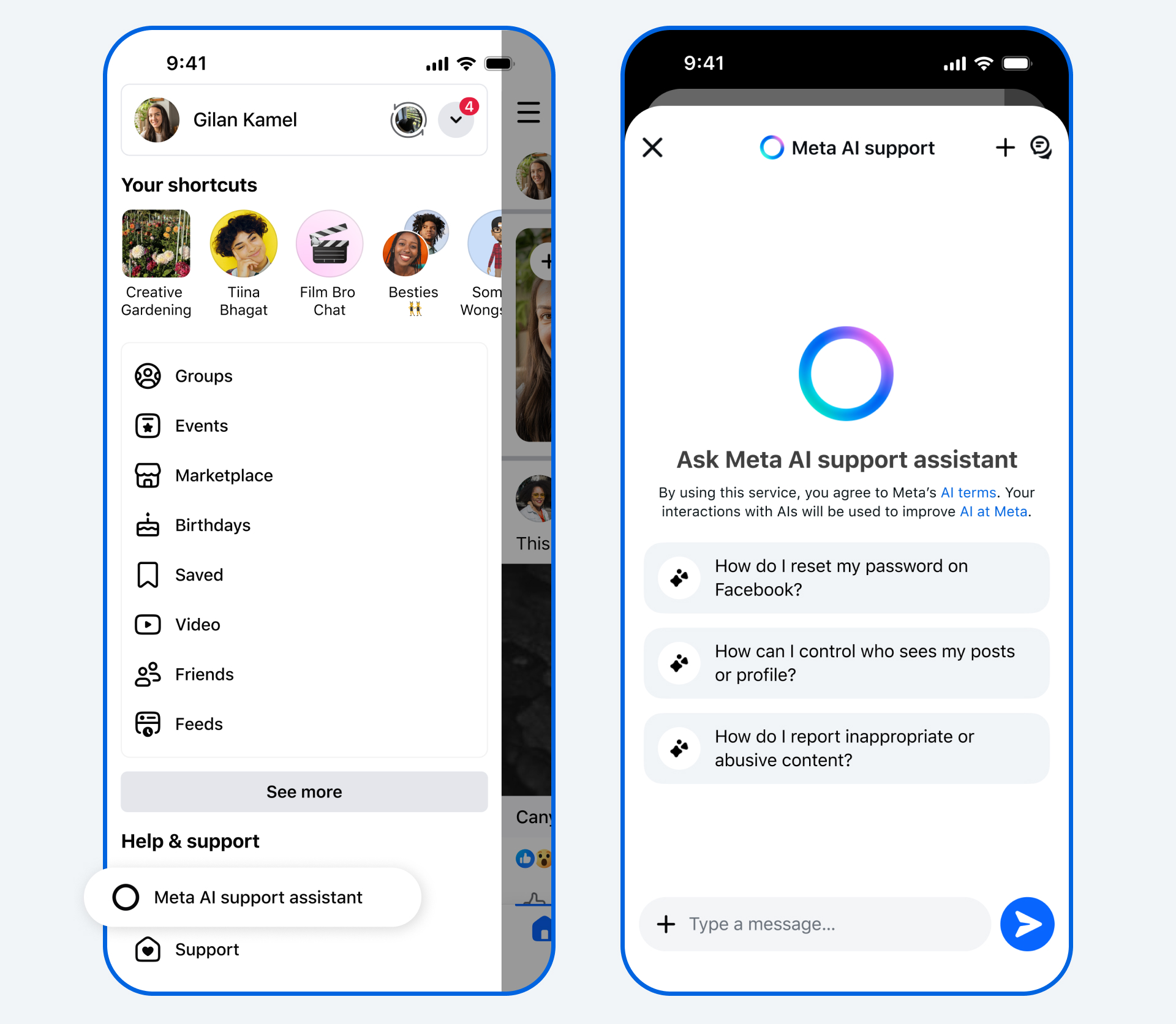

Meta has launched new Artificial Intelligence (AI) tools designed to provide support for users of its applications.

The AI Support Assistant will work on the Facebook and Instagram apps, the company said in a statement.

The tools will help users to receive reliable and action-oriented assistance when needed.

In December, Meta previewed the AI support assistant, a tool designed to provide reliable, 24/7 help for nearly any support issue.

Now, Meta is rolling it out globally on the Facebook and Instagram apps for iOS and Android, and within Help Centre on Facebook and Instagram on desktop, with even more capabilities and ways to help.

The new Meta AI support assistant is designed to help resolve account problems from start to finish. It offers answers to any question, such as those about notification settings or new features, and can also take action for users on a growing set of requests directly within Facebook and, in the future, on Instagram.

The feature can report scams, impersonation accounts, or problematic content, make it easier to see why content was taken down, provide appeal options, track what happens next, manage privacy settings, reset passwords, and update profile settings.

The Meta AI support assistant can respond to requests typically in under five seconds, dramatically reducing wait times compared to traditional help centre searches or seeking answers on external websites.

“The Meta AI support assistant is a major step in our work to deliver stronger support on our apps. In fact, among people who have provided feedback, the majority report a positive experience with the Meta AI support assistant. It’s rolling out now in all languages supported by Facebook and Instagram for support topics.

“We’re continuing to invest in AI-powered tools to make support more accessible, reliable, and effective, and we’ll keep evolving the Meta AI support assistant as more people use it and as the technology advances, so it continues to improve over time,” the organisation disclosed.

Meta has also deployed AI to improve content enforcement and reduce the chance that scammers trick people into giving away their login details. These systems are ultimately finding and mitigating 5,000 scam attempts per day that no existing review team had caught before.

Meta said that over the next few years it would deploy these more advanced AI systems across its apps once they consistently perform better than its current methods of content enforcement, transforming its approach.

“As we do this, we’ll reduce our reliance on third-party vendors for content enforcement and focus on strengthening our internal systems and workforce.

“While we’ll still have people who review content, these systems will be able to take on work that’s better-suited to technology, like repetitive reviews of graphic content or areas where adversarial actors are constantly changing their tactics, such as with illicit drug sales or scams,” it stated.

Technology

Facebook Offers New Tools to Report Impersonation, Removes 20 million Accounts

By Modupe Gbadeyanka

As part of its commitment to celebrating and rewarding creativity, Facebook has updated its guidance, with clear definitions of what counts as original and unoriginal content.

In a message on Monday, the social media platform said it was offering content creators new tools to report impersonation.

Launched last year, the content protection tool is expanding beyond detecting reel matches across Meta platforms and will now also flag potential impersonation.

Creators can take action on content theft and easily submit impersonation reports all in one place.

Facebook, in the statement received by Business Post, said creators can check for access to content protection in their professional dashboard or apply for access.

The platform also disclosed that in 2025, it removed over 20 million accounts impersonating large content creators, and impersonation reports related to large content creators dropped by 33 per cent.

Further, Facebook is deprioritising unoriginal content by making sure it does not perform well on its platform.

It noted that content that is duplicated from other sources or makes low-value changes to someone else’s content may see significantly reduced reach, and accounts that primarily post unoriginal content may lose eligibility for recommendations and monetisation.

It was emphasised that “these changes provide creators who post original content with greater reach and monetisation opportunities, provide stronger protections for their work, and reduce the reach of unoriginal content.”
